LLMs show emergent intelligence as parameters, compute, and data scale up, hinting at artificial general intelligence. Despite this progress, deployed LLMs still make errors such as hallucinations, biases, and factual inaccuracies. In addition, knowledge keeps evolving, which leaves what the models learned during pretraining out of date. Errors need to be fixed quickly during deployment, because retraining or fine-tuning is often prohibitively expensive, which makes it hard to sustain lifelong knowledge growth.

While long-term memory can be updated through (re)pretraining, fine-tuning, and model editing, working memory supports inference and is enhanced by retrieval-based methods like GRACE. However, debate persists over whether fine-tuning or retrieval is more effective. Current knowledge injection methods face challenges such as computational overhead and overfitting. Model editing techniques, including constrained fine-tuning and meta-learning, aim to edit LLMs efficiently. Recent work focuses on lifelong editing, but it requires extensive domain-specific training, which is difficult because upcoming edits are hard to predict and relevant data is hard to access.

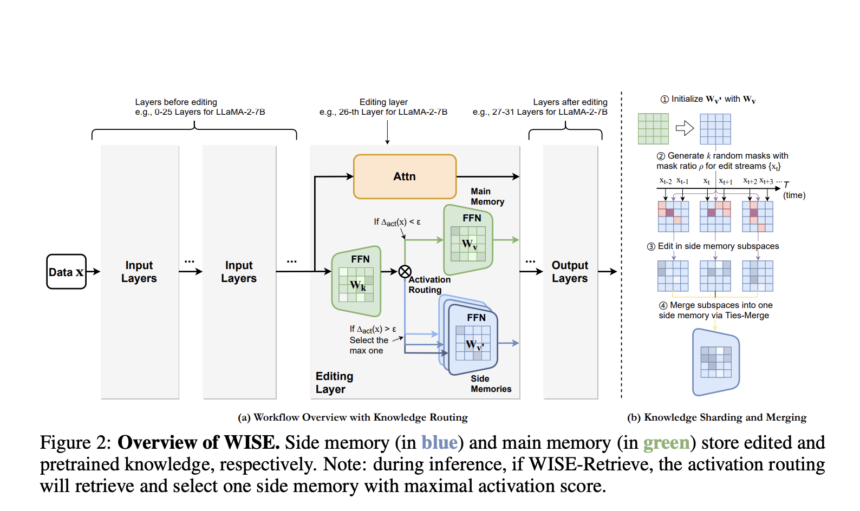

After studying these questions and approaches in depth, researchers from Zhejiang University and Alibaba Group propose WISE, a dual parametric memory scheme with a main memory for pretrained knowledge and a side memory for edited knowledge. Only the side memory is changed by edits, and a router decides which memory to use for a given query. For continual editing, WISE uses a knowledge-sharding mechanism that places different sets of edits in distinct parameter subspaces to avoid conflicts, then merges them into a shared memory.
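To make the routing idea concrete, here is a minimal sketch in plain Python. It assumes one plausible rule: send a query to the edited memory when the two memories' outputs for that query differ by more than a threshold. The names `matvec`, `routing_score`, `route`, the toy weights, and the threshold value are all hypothetical and chosen for illustration; this is not the authors' implementation.

```python
import math

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

def routing_score(main_w, side_w, activation):
    """L2 distance between the two memories' outputs for one activation."""
    main_out = matvec(main_w, activation)
    side_out = matvec(side_w, activation)
    return math.sqrt(sum((s - m) ** 2 for s, m in zip(side_out, main_out)))

def route(main_w, side_w, activation, threshold):
    """Return which memory answers the query, plus that memory's output."""
    if routing_score(main_w, side_w, activation) > threshold:
        return "side", matvec(side_w, activation)
    return "main", matvec(main_w, activation)

# Toy weights (assumed): the edited memory starts as a copy of the
# pretrained one, then an edit changes its response to the second input.
main_w = [[1.0, 0.0], [0.0, 1.0]]
side_w = [row[:] for row in main_w]
side_w[0][1] = 2.0

edited_query = [0.0, 1.0]     # touches the edited direction
unrelated_query = [1.0, 0.0]  # untouched by the edit

print(route(main_w, side_w, edited_query, threshold=0.5))
print(route(main_w, side_w, unrelated_query, threshold=0.5))
```

In this toy setup the first query is routed to the edited memory and the second falls back to the pretrained one, because the two weight matrices agree on every input the edit did not touch.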

WISE has two main components: side memory design, and knowledge sharding and merging. The first involves a side memory, initialized as a copy of a certain FFN layer of the LLM, that stores the edits, together with a routing mechanism that selects a memory during inference. The second uses knowledge sharding to divide edits into random subspaces for editing, and knowledge merging techniques to combine these subspaces into a unified side memory. Additionally, WISE introduces WISE-Retrieve, which retrieves among multiple side memories based on activation scores and improves lifelong editing scenarios.
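The idea of confining each batch of edits to a random parameter subspace and then combining the results can be sketched as follows. This is an illustration under stated assumptions: parameters are a flat list, each subspace is a random index mask, and the combination step is a simple per-parameter average over the batches that touched that parameter. The article does not specify the combination rule, so the averaging here is a stand-in, and `random_mask`, `edit_shard`, and `merge_shards` are hypothetical names.

```python
import random

def random_mask(size, keep_ratio, rng):
    """Pick a random subset of parameter indices (one subspace)."""
    k = max(1, int(size * keep_ratio))
    return set(rng.sample(range(size), k))

def edit_shard(base, update, mask):
    """Copy base, applying the update only at the masked positions."""
    return [b + u if i in mask else b
            for i, (b, u) in enumerate(zip(base, update))]

def merge_shards(base, shards, masks):
    """Average each parameter's change over the shards that edited it.

    Assumption: plain averaging stands in for the merging technique.
    """
    merged = []
    for i, b in enumerate(base):
        deltas = [shard[i] - b for shard, mask in zip(shards, masks) if i in mask]
        merged.append(b + sum(deltas) / len(deltas) if deltas else b)
    return merged

rng = random.Random(0)                 # fixed seed for repeatability
base = [0.0] * 8                       # toy copy of the layer's weights
masks = [random_mask(len(base), 0.5, rng) for _ in range(2)]
updates = [[1.0] * 8, [-0.5] * 8]      # two batches of edits (assumed values)
shards = [edit_shard(base, u, m) for u, m in zip(updates, masks)]
merged = merge_shards(base, shards, masks)
print(merged)
```

Parameters that no mask selected keep their original values, which is what limits interference: two batches of edits can only conflict where their masks overlap.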

WISE outperforms existing methods in question answering (QA) and hallucination settings. The margin is largest on long editing sequences, where it remains stable and handles sequential edits effectively. Although methods like MEND and ROME are competitive initially, they degrade as editing sequences become longer. Directly editing long-term memory causes a significant drop in locality, which impairs generalization. GRACE excels at locality but sacrifices generalization in continual editing. WISE balances reliability, generalization, and locality, outperforming the baselines on various tasks. In out-of-distribution evaluation, WISE also generalizes well and outperforms the other methods.
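The three criteria can be made concrete with a small sketch. It assumes the common definitions: reliability is the success rate on the edited prompts themselves, generalization is the success rate on paraphrases of those prompts, and locality is the fraction of unrelated prompts whose answer is unchanged by editing. The models below are toy lookup functions, and every name and prompt is hypothetical.

```python
def exact_match_rate(model, pairs):
    """Fraction of (prompt, target) pairs the model answers exactly."""
    return sum(model(p) == t for p, t in pairs) / len(pairs)

def editing_metrics(edited, original, edits, paraphrases, unrelated):
    """Score an edited model on the three criteria."""
    unchanged = sum(edited(p) == original(p) for p in unrelated)
    return {
        "reliability": exact_match_rate(edited, edits),
        "generalization": exact_match_rate(edited, paraphrases),
        "locality": unchanged / len(unrelated),
    }

# Toy "pretrained" model: a fixed lookup table (assumed data).
pretrained = {"Capital of France?": "Paris", "2 + 2?": "4"}

def original(prompt):
    return pretrained.get(prompt, "unknown")

# Toy edited model that memorizes the exact edit prompt only.
edit_table = {"Who leads ACME?": "Dana"}

def edited(prompt):
    return edit_table.get(prompt, original(prompt))

scores = editing_metrics(
    edited,
    original,
    edits=[("Who leads ACME?", "Dana")],
    paraphrases=[("Who is the head of ACME?", "Dana")],
    unrelated=["Capital of France?", "2 + 2?"],
)
print(scores)
```

This toy editor scores perfectly on reliability and locality but fails the paraphrase, which is the kind of trade-off the comparison above describes for methods that memorize edits without generalizing them.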

This research identifies the difficulty of achieving reliability, generalization, and locality at the same time in current lifelong model editing approaches, and attributes it to the gap between working memory and long-term memory. To overcome this problem, the authors propose WISE, which combines a side memory with model merging techniques. The results indicate that WISE shows promise in achieving high scores on all three metrics across various datasets and LLMs.
