Large language models (LLMs) such as ChatGPT have attracted considerable attention because they can perform a wide range of tasks, including language processing, knowledge extraction, reasoning, planning, coding, and tool use. These capabilities have spurred research into even more sophisticated AI models and hint at the possibility of artificial general intelligence (AGI).

The Transformer neural network architecture, on which LLMs are based, is trained autoregressively: the model learns to predict the next word (more precisely, the next token) in a sequence. The success of this architecture across such a wide range of intelligent tasks raises a fundamental question: why does learning to predict the next word lead to such high levels of intelligence?
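For readers who want the training objective spelled out: autoregressive language modeling factorizes the probability of a token sequence $x_1, \dots, x_T$ into next-token conditionals and fits the model parameters $\theta$ by minimizing the negative log-likelihood (the usual cross-entropy loss). The notation below is the standard textbook form and our own choice, not taken from the ALPINE paper.

```latex
p_\theta(x_1, \dots, x_T) = \prod_{t=1}^{T} p_\theta\left(x_t \mid x_1, \dots, x_{t-1}\right),
\qquad
\mathcal{L}(\theta) = -\sum_{t=1}^{T} \log p_\theta\left(x_t \mid x_1, \dots, x_{t-1}\right).
```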

Researchers have explored various angles to better understand the power of LLMs. Recent work has focused in particular on their planning ability, an important part of human intelligence that comes into play in tasks such as organizing projects, planning trips, and proving mathematical theorems. By understanding how LLMs perform planning tasks, researchers hope to bridge the gap between basic next-word prediction and more sophisticated intelligent behavior.

In recent work, a team of researchers presented the results of the ALPINE project, which stands for “Autoregressive Learning for Planning In NEtworks”. The study examines how the autoregressive learning mechanism of Transformer-based language models enables the development of planning capabilities, and aims to identify possible limitations in those capabilities.

To explore this, the team framed planning as a path-finding task on a network: given a source node and a target node, the model must generate a valid path from the source to the target. The results show that Transformers can perform this path-finding task by encoding the graph's adjacency and reachability matrices in their weights.
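To make the path-finding setup concrete, here is a minimal sketch, assuming a small directed acyclic graph with nodes numbered 0 to n-1 and 0/1 matrices stored as nested lists. It is not the paper's model: it simply hard-codes the kind of rule the study argues a Transformer can encode in its weights, namely "step to a node that is adjacent to the current node and from which the target is reachable". The helper names `adjacency`, `reachability`, and `plan_path` are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

Matrix = List[List[int]]


def adjacency(n: int, edges: Sequence[Tuple[int, int]]) -> Matrix:
    """0/1 adjacency matrix of a directed graph: adj[u][v] == 1 iff edge u -> v."""
    adj = [[0] * n for _ in range(n)]
    for u, v in edges:
        adj[u][v] = 1
    return adj


def reachability(adj: Matrix) -> Matrix:
    """Reachability via Warshall's transitive closure:
    reach[u][v] == 1 iff there is a directed path of length >= 1 from u to v."""
    n = len(adj)
    reach = [row[:] for row in adj]
    for k in range(n):
        for i in range(n):
            if reach[i][k]:
                for j in range(n):
                    if reach[k][j]:
                        reach[i][j] = 1
    return reach


def plan_path(adj: Matrix, reach: Matrix, source: int, target: int,
              max_steps: int = 100) -> Optional[List[int]]:
    """Generate a path one node at a time (hypothetical greedy rule)."""
    path = [source]
    current = source
    for _ in range(max_steps):
        if current == target:
            return path
        # Candidate next nodes: neighbours of the current node that are the
        # target itself or from which the target is still reachable.
        candidates = [
            v for v in range(len(adj))
            if adj[current][v] and (v == target or reach[v][target])
        ]
        if not candidates:
            return None  # dead end: no neighbour leads to the target
        current = target if target in candidates else candidates[0]
        path.append(current)
    return None


if __name__ == "__main__":
    adj = adjacency(5, [(0, 1), (1, 2), (2, 3), (0, 4)])
    reach = reachability(adj)
    print(plan_path(adj, reach, 0, 3))  # [0, 1, 2, 3]
    print(plan_path(adj, reach, 4, 3))  # None
```

The adjacency check keeps every step a real edge, while the reachability check steers the walk toward the target; on a DAG with full reachability, this rule always finds a path when one exists.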

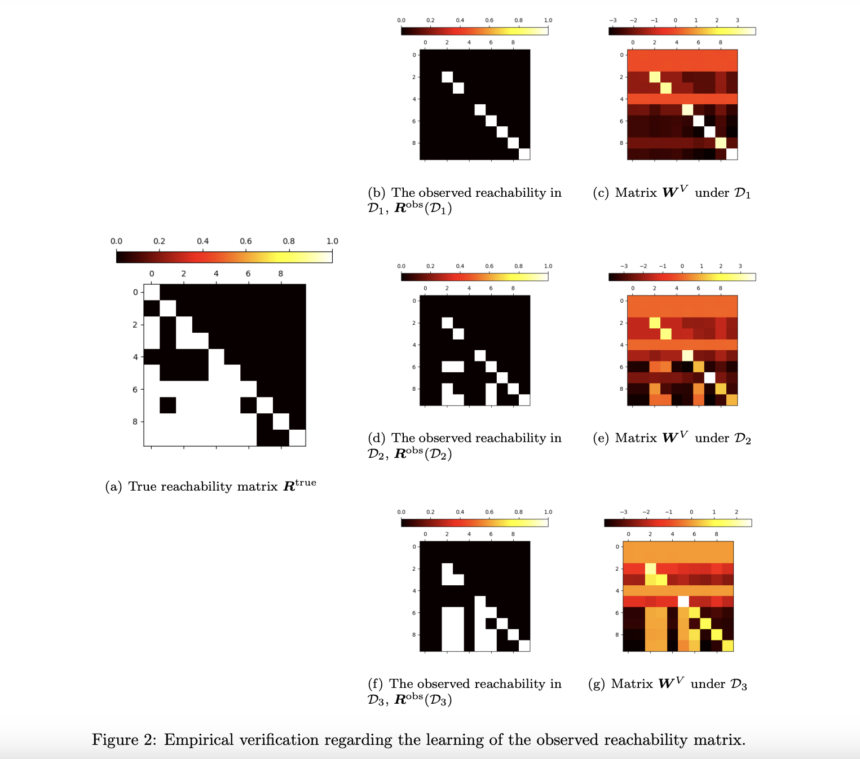

The team also analyzed the gradient-based learning dynamics of Transformers theoretically. The analysis indicates that Transformers can learn both the adjacency matrix and a partial (incomplete) version of the reachability matrix. Experiments confirmed these theoretical predictions, showing that trained Transformers learn the adjacency matrix together with an incomplete reachability matrix. The team also applied the methodology to Blocksworld, a real-world planning benchmark, and the results supported the main conclusions, indicating that the methodology is applicable there as well.
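Why would the learned reachability be incomplete? A rough intuition, sketched below under our own simplifying assumptions: suppose the only evidence a model sees is a set of training paths, each running from its source to its target. Consecutive nodes reveal edges (adjacency), but a node is only "seen" to reach a target if it appears on some training path ending at that target. The function `learned_statistics` and this exact counting rule are hypothetical illustrations, not the paper's formal definitions.

```python
from typing import Iterable, Sequence, Set, Tuple

Pair = Tuple[int, int]


def learned_statistics(paths: Iterable[Sequence[int]]) -> Tuple[Set[Pair], Set[Pair]]:
    """Collect adjacency and observed-reachability pairs from training paths.

    Assumption: each path starts at its source node and ends at its target.
    - adjacency: every consecutive pair (u, v) that appears in some path
    - observed reachability: (k, target) for every node k before the target
    """
    adjacency: Set[Pair] = set()
    observed_reach: Set[Pair] = set()
    for path in paths:
        if len(path) < 2:
            continue  # a single node carries no edge or reachability evidence
        target = path[-1]
        for u, v in zip(path, path[1:]):
            adjacency.add((u, v))
        for k in path[:-1]:
            observed_reach.add((k, target))
    return adjacency, observed_reach


if __name__ == "__main__":
    adj, obs = learned_statistics([[0, 1, 2], [2, 3]])
    print(sorted(adj))      # [(0, 1), (1, 2), (2, 3)]
    print(sorted(obs))      # [(0, 2), (1, 2), (2, 3)]
    print((0, 3) in obs)    # False: true in the graph, never observed
```

Every edge of the chain 0 → 1 → 2 → 3 is observed, yet the fact that node 0 reaches node 3 never appears as a (node, target) pair, which is the sense in which the reachability information is only partial.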

The study also highlighted a potential limitation of Transformers in path-finding: they cannot identify reachability relationships through transitivity. As a result, they may fail whenever producing a complete path requires path concatenation, that is, when the correct path depends on reachability that can only be inferred by chaining connections through intermediate nodes.
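A toy illustration of this transitivity gap, under our own assumptions: the hypothetical training data contains the paths 0 → 1 → 2 and 2 → 3, so the observed reachability pairs are (0, 2), (1, 2), and (2, 3), but never (0, 3) or (1, 3). Those missing pairs follow only by transitivity. A greedy planner (the hypothetical `greedy_path` below) that trusts observed reachability alone gets stuck at the source, while the same planner succeeds once the transitive closure is supplied.

```python
from typing import List, Optional, Set, Tuple

Pair = Tuple[int, int]


def transitive_closure(pairs: Set[Pair]) -> Set[Pair]:
    """Smallest transitive superset: add (a, d) whenever (a, b) and (b, d) exist."""
    closure = set(pairs)
    changed = True
    while changed:
        changed = False
        for a, b in list(closure):
            for c, d in list(closure):
                if b == c and (a, d) not in closure:
                    closure.add((a, d))
                    changed = True
    return closure


def greedy_path(adjacency: Set[Pair], reach: Set[Pair], source: int, target: int,
                max_steps: int = 50) -> Optional[List[int]]:
    """Step to a neighbour that is the target or (per `reach`) can reach it."""
    path = [source]
    current = source
    for _ in range(max_steps):
        if current == target:
            return path
        candidates = sorted(
            v for (u, v) in adjacency
            if u == current and (v == target or (v, target) in reach)
        )
        if not candidates:
            return None  # no neighbour is known to lead to the target
        current = target if target in candidates else candidates[0]
        path.append(current)
    return None


if __name__ == "__main__":
    edges = {(0, 1), (1, 2), (2, 3)}
    observed = {(0, 2), (1, 2), (2, 3)}  # (node on path, target) pairs
    print(sorted(transitive_closure(observed) - observed))  # [(0, 3), (1, 3)]
    print(greedy_path(edges, observed, 0, 3))  # None
    print(greedy_path(edges, transitive_closure(observed), 0, 3))  # [0, 1, 2, 3]
```

The two pairs printed first are exactly the reachability facts that require concatenating the two training paths; without them, the planner has no evidence that stepping from 0 to 1 leads anywhere useful.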

The team summarized its main contributions as follows:

- A theoretical analysis of how Transformers trained with autoregressive learning perform path-planning tasks.

- Empirical validation that Transformers can extract adjacency and partial reachability information and generate valid paths.

- Identification of Transformers' inability to fully capture transitive reachability relationships.

In conclusion, this research sheds light on the fundamental workings of autoregressive learning and how it enables planning in networks. The study deepens our understanding of the general planning capabilities of Transformer models and may help in building more sophisticated AI systems capable of handling difficult planning tasks across a range of industries.

Check out the paper. All credit for this research goes to the researchers of this project.

Tanya Malhotra is a final-year undergraduate at the University of Petroleum and Energy Studies, Dehradun, pursuing a BTech in Computer Engineering with a specialization in Artificial Intelligence and Machine Learning.

She is passionate about data science and has strong analytical and critical-thinking skills, along with a keen interest in learning new skills, leading groups, and managing work in an organized manner.