Amazon Titan Text Premier, the latest addition to the Amazon Titan family of large language models (LLMs), is now generally available in Amazon Bedrock. Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading artificial intelligence (AI) companies such as AI21 Labs, Anthropic, Cohere, Meta, Stability AI, and Amazon through a single API, along with a broad set of capabilities for building generative AI applications with security, privacy, and responsible AI.

Amazon Titan Text Premier is an advanced, high-performance, cost-effective LLM designed to deliver superior performance for enterprise text generation applications, including optimized performance for retrieval augmented generation (RAG) and agents. The model is built from the ground up with safe, secure, and trustworthy responsible AI practices, and excels at delivering exceptional generative AI text capabilities at scale.

Exclusive to Amazon Bedrock, Amazon Titan Text models support a wide range of text-related tasks, including summarization, text generation, classification, question answering, and information extraction. With Amazon Titan Text Premier, you can access new levels of efficiency and productivity for your text generation needs.
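As a rough illustration of what calling the model looks like, the following Python sketch builds a Titan Text request body and reads the generated text from the response. It is not taken from the sample applications: the helper names, the default parameter values, and the `us-east-1` default are assumptions made for this example, and the `generate` function needs `boto3`, AWS credentials, and model access enabled in your account.

```python
import json

# Model ID for Amazon Titan Text Premier in Amazon Bedrock.
MODEL_ID = "amazon.titan-text-premier-v1:0"


def build_titan_request(prompt, max_tokens=512, temperature=0.2, top_p=0.9):
    """Return the JSON request body for a Titan Text model (illustrative defaults)."""
    return json.dumps({
        "inputText": prompt,
        "textGenerationConfig": {
            "maxTokenCount": max_tokens,
            "temperature": temperature,
            "topP": top_p,
            "stopSequences": [],
        },
    })


def extract_output_text(response_body):
    """Pull the generated text out of a Titan Text response body (str or bytes)."""
    payload = json.loads(response_body)
    return payload["results"][0]["outputText"]


def generate(prompt, region="us-east-1"):
    """Call Amazon Bedrock. Requires boto3, credentials, and model access."""
    import boto3  # imported lazily so the helpers above work without it

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=build_titan_request(prompt),
        contentType="application/json",
        accept="application/json",
    )
    return extract_output_text(response["body"].read())
```

Keeping the request construction separate from the network call makes it easy to inspect or unit test the payload before sending it.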

In this article, we explore building and deploying two sample applications powered by Amazon Titan Text Premier. To accelerate development and deployment, we use the open source AWS Generative AI CDK Constructs (launched by Werner Vogels at AWS re:Invent 2023). AWS Cloud Development Kit (AWS CDK) constructs accelerate application development by providing developers with reusable infrastructure patterns that you can seamlessly integrate into your applications, allowing you to focus on what makes your application different.

Document Explorer sample application

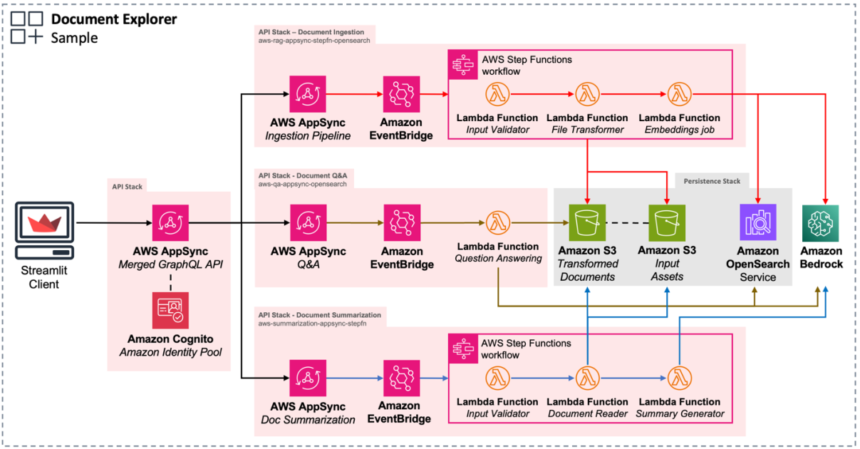

The Document Explorer sample generative AI application can help you quickly understand how to build end-to-end generative AI applications on AWS. It includes examples of key components needed for generative AI applications, such as:

- Data ingestion pipeline – Ingests documents, converts them to text and stores them in a knowledge base for retrieval. This allows use cases like RAG to tailor generative AI applications to your data.

- Document summarization – Summarizes PDF documents using Amazon Titan Text Premier through Amazon Bedrock.

- Question answering – Answers questions in natural language by retrieving relevant documents from the knowledge base and using LLMs such as Amazon Titan Text Premier through Amazon Bedrock.
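The question answering component follows the RAG pattern: retrieved passages are placed in the prompt so the model answers from your data. The sketch below shows one way to assemble such a prompt. The function name, the prompt wording, and the character budget are hypothetical and are not the sample application's actual prompt.

```python
def build_rag_prompt(question, passages, max_chars=4000):
    """Assemble a grounded prompt from retrieved passages.

    Passages are added in order until the character budget is used up,
    so the most relevant passages should come first.
    """
    context_parts = []
    used = 0
    for index, passage in enumerate(passages, start=1):
        entry = f"[{index}] {passage.strip()}"
        if used + len(entry) > max_chars:
            break  # stop before exceeding the context budget
        context_parts.append(entry)
        used += len(entry)
    context = "\n".join(context_parts)
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

Numbering the passages lets you ask the model to cite which passage supports its answer, which can help when reviewing responses.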

Follow the steps in the README to clone and deploy the application in your account. The application deploys all required infrastructure, as shown in the following architecture diagram.

After deploying the application, upload a sample PDF file to the input Amazon Simple Storage Service (Amazon S3) bucket by choosing Select a document in the navigation pane. For example, you can download Amazon's annual letters to shareholders from 1997 to 2023 and upload them using the web interface. On the Amazon S3 console, you can see that the files you uploaded are now in the S3 bucket whose name begins with persistencestack-inputassets.
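If you prefer to locate the input bucket programmatically instead of on the console, a small helper can filter bucket names by prefix. This is a minimal sketch, assuming `boto3` is installed and your credentials can list buckets; the function names are made up for this example.

```python
def buckets_with_prefix(list_buckets_response, prefix):
    """Return the sorted bucket names in a ListBuckets response that start with prefix."""
    names = [bucket["Name"] for bucket in list_buckets_response.get("Buckets", [])]
    return sorted(name for name in names if name.startswith(prefix))


def find_input_buckets(prefix="persistencestack-inputassets"):
    """Query Amazon S3 for buckets with the given prefix. Requires boto3 and credentials."""
    import boto3  # imported lazily so the pure helper above works without it

    s3 = boto3.client("s3")
    return buckets_with_prefix(s3.list_buckets(), prefix)
```

The same helper with the shorter prefix `persistencestack` can list every bucket the application created, which is useful when emptying them during cleanup.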

After uploading a file, open a document to see it displayed in the browser.

Choose Questions and answers in the navigation pane and choose your preferred model (for this example, Amazon Titan Premier). You can now ask a question about the document you uploaded.

The following diagram illustrates an example workflow in Document Explorer.

Don't forget to delete the AWS CloudFormation stacks to avoid unexpected charges. First make sure to delete all data from the S3 buckets, especially anything in buckets whose names start with persistencestack. Then run the following command from a terminal:

Amazon Bedrock Agent and Custom Knowledge Base sample application

The Amazon Bedrock Agent and Custom Knowledge Base sample generative AI application is a chat assistant designed to answer questions about literature using RAG from a selection of Project Gutenberg books.

This application deploys an Amazon Bedrock agent that can query an Amazon Bedrock knowledge base backed by Amazon OpenSearch Serverless as a vector store. An S3 bucket is created to store the books for the knowledge base.
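To see what the agent's retrieval step does, you can query a knowledge base directly with the `Retrieve` operation of the Amazon Bedrock agent runtime. The following sketch is illustrative only: the helper names, the `top_k` and `min_score` parameters, and the default Region are assumptions, and you must supply the ID of your own knowledge base.

```python
def build_retrieve_kwargs(knowledge_base_id, query, top_k=5):
    """Build keyword arguments for the bedrock-agent-runtime retrieve call."""
    return {
        "knowledgeBaseId": knowledge_base_id,
        "retrievalQuery": {"text": query},
        "retrievalConfiguration": {
            "vectorSearchConfiguration": {"numberOfResults": top_k}
        },
    }


def passages_from_results(retrieve_response, min_score=0.0):
    """Extract passage text from a retrieve response, dropping low-scoring results."""
    passages = []
    for result in retrieve_response.get("retrievalResults", []):
        if result.get("score", 0.0) >= min_score:
            passages.append(result["content"]["text"])
    return passages


def retrieve_passages(knowledge_base_id, query, region="us-east-1"):
    """Query the knowledge base. Requires boto3, credentials, and a deployed knowledge base."""
    import boto3  # imported lazily so the helpers above work without it

    client = boto3.client("bedrock-agent-runtime", region_name=region)
    response = client.retrieve(**build_retrieve_kwargs(knowledge_base_id, query))
    return passages_from_results(response)
```

Inspecting the retrieved passages on their own is a quick way to tell whether a poor answer comes from retrieval or from generation.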

Follow the steps in the README to clone the sample application in your account. The following diagram illustrates the architecture of the deployed solution.

Update the file in the repository that defines which foundation model to use when creating the agent:

Follow the steps in the README to deploy the sample code into your account and ingest the sample documents.

Navigate to the Agents page on the Amazon Bedrock console in your AWS Region and find your newly created agent. The AgentId can be found in the Outputs section of the CloudFormation stack.
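The stack outputs can also be read with the AWS SDK. The sketch below looks up a single output value from a `DescribeStacks` response. The stack name and output key are deliberately left as parameters because they depend on your deployment; check the Outputs section of your stack for the exact key.

```python
def stack_output(describe_stacks_response, output_key):
    """Return the value of one CloudFormation stack output, or None if it is absent."""
    for stack in describe_stacks_response.get("Stacks", []):
        for output in stack.get("Outputs", []):
            if output["OutputKey"] == output_key:
                return output["OutputValue"]
    return None


def lookup_agent_id(stack_name, output_key):
    """Read an output from a deployed stack. Requires boto3 and credentials.

    Both arguments are deployment-specific and must be supplied by the caller.
    """
    import boto3  # imported lazily so the pure helper above works without it

    cloudformation = boto3.client("cloudformation")
    response = cloudformation.describe_stacks(StackName=stack_name)
    return stack_output(response, output_key)
```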

You can now ask some questions. You may need to tell the agent which book you want to ask about or refresh the session when asking about different books. Here are some examples of questions you can ask:

- What are the most popular books in the library?

- Who is Mr. Bingley really infatuated with at the Meryton ball?
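You can ask the same questions programmatically through the `InvokeAgent` operation. The sketch below starts a fresh session for each book, mirroring the advice to refresh the session when switching books, and joins the streamed response chunks into one string. The function names and the default Region are assumptions for this example; the agent ID and alias ID come from your own deployment.

```python
import uuid


def new_session_id():
    """Create a fresh session ID, for example when switching to another book."""
    return str(uuid.uuid4())


def collect_completion(event_stream):
    """Join the text chunks of an InvokeAgent event stream, ignoring other events."""
    parts = []
    for event in event_stream:
        chunk = event.get("chunk")
        if chunk and "bytes" in chunk:
            parts.append(chunk["bytes"].decode("utf-8"))
    return "".join(parts)


def ask_agent(agent_id, agent_alias_id, question, session_id, region="us-east-1"):
    """Send one question to the agent. Requires boto3, credentials, and a deployed agent."""
    import boto3  # imported lazily so the helpers above work without it

    client = boto3.client("bedrock-agent-runtime", region_name=region)
    response = client.invoke_agent(
        agentId=agent_id,
        agentAliasId=agent_alias_id,
        sessionId=session_id,
        inputText=question,
    )
    return collect_completion(response["completion"])
```

Reusing one session ID keeps follow-up questions about the same book in context, while calling `new_session_id()` gives the agent a clean slate for the next book.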

The following screenshot shows an example of the workflow.

Don't forget to delete the CloudFormation stack to avoid unexpected charges. Delete all data from S3 buckets, then run the following command from a terminal:

Conclusion

Amazon Titan Text Premier is available today in the US East (N. Virginia) Region. Custom tuning of Amazon Titan Text Premier is also available in preview today in the US East (N. Virginia) Region. Check the full list of Regions for future updates.

To learn more about the Amazon Titan family of models, visit the Amazon Titan product page. For pricing details, see Amazon Bedrock Pricing. Visit the AWS Generative AI CDK Constructs GitHub repository for more details on available constructs and additional documentation. For practical examples to get started, check out the AWS samples repository.

About the authors

Alain Krok is a Senior Solutions Architect passionate about emerging technologies. His past experience includes designing and implementing IIoT solutions for the oil and gas industry and working on robotics projects. He enjoys pushing boundaries and engaging in extreme sports when he's not designing software.

Laith Al-Saadoon is a Principal Prototyping Architect on the Prototyping and Cloud Engineering (PACE) team. He builds prototypes and solutions using generative AI, machine learning, data analytics, IoT and edge computing, and full-stack development to solve real-world customer challenges. In his free time, Laith enjoys the outdoors: fishing, photography, drone flying, and hiking.

Justin Lewis leads the Emerging Technologies Accelerator at AWS. Justin and his team help clients leverage emerging technologies like generative AI by providing open source software examples to inspire their own innovation. He lives in the San Francisco Bay Area with his wife and son.

Anupam Dewan is a Senior Solutions Architect passionate about Generative AI and its real-life applications. He and his team help Amazon Builders who create customer-facing applications using generative AI. He lives in the Seattle area, and outside of work, he loves hiking and enjoying nature.
