Large language models (LLMs) have shown promise in powering autonomous agents that control computer interfaces to accomplish human tasks. However, without fine-tuning human-collected task demonstrations, the performance of these agents remains relatively low. A major challenge lies in developing viable approaches to creating real-world computer control agents capable of efficiently executing complex tasks in various applications and environments. Current methodologies, which rely on pre-trained LLMs without task-specific adjustment, have achieved only limited success, with reported task success rates ranging from 12% to 46% in recent studies.

Previous attempts to develop computational control agents have explored various approaches, including zero- or few-shot prompting of large language models, as well as fine-tuning techniques. Zero-shot prompting methods use pre-trained LLMs without any task-specific fine-tuning, while few-shot approaches provide a small number of examples to the LLM. Fine-tuning methods involve further training of the LLM on task demonstrations, either end-to-end or for specific functionality such as identifying interactive user interface elements. Notable examples include SeeAct, WebGPT, WebAgent and Synapse. However, these existing methods have limitations in terms of performance, domain generalization, or task complexity that they can handle effectively.

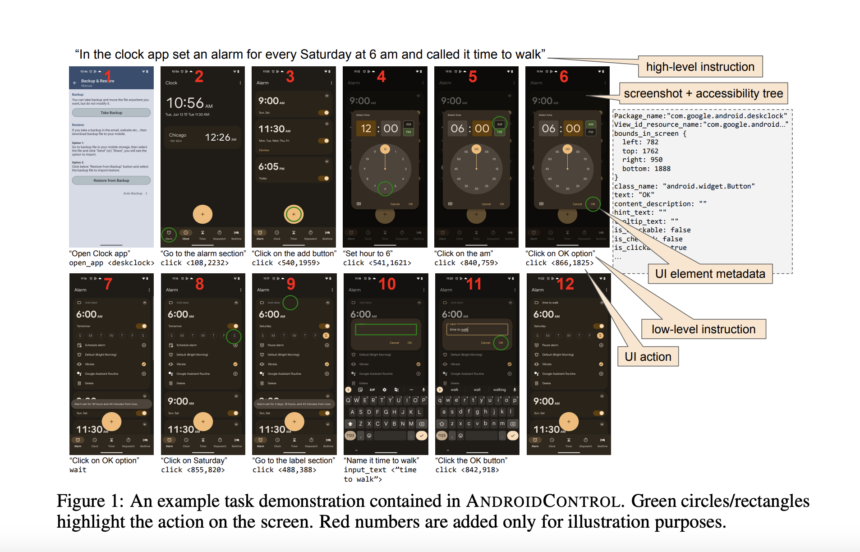

Google DeepMind and Google researchers present ANDROID CONTROL, a large-scale dataset of 15,283 human demonstrations of tasks performed in Android apps. A key feature of ANDROIDCONTROL is that it provides high-level and low-level human-generated instructions for each task, allowing investigation of the levels of task complexity that models can handle while providing supervision richer during training. Additionally, this is the most diverse UI control dataset to date, comprising 15,283 unique tasks spread across 833 different Android apps. This diversity allows the generation of multiple test splits to measure performance both within and outside the task domain covered by the training data. The proposed method involves using ANDROIDCONTROL to quantify how fine-tuning scales when applied to low- and high-level tasks, both in-domain and out-of-domain, and comparing the Fine-tuning approaches with various zero baselines and few hits. .

The ANDROIDCONTROL dataset was collected over a year using crowdsourcing. Crowdworkers were given generic feature descriptions for apps from 40 different categories and asked to instantiate them in specific tasks involving the apps of their choice. This approach led to the collection of 15,283 task demos covering 833 Android apps, including popular apps as well as less popular or regional apps. For each task, the annotators first provided a high-level description in natural language. Then, they performed the task on a physical Android device, with their actions and associated screenshots captured. Importantly, the annotators also provided low-level natural language descriptions of each action before executing it. The resulting dataset contains both high-level and low-level instructions for each task, allowing the analysis of different levels of task complexity. Careful divisions of the datasets were created to measure in-domain and out-of-domain performance.

Results show that for evaluation in the IDD subset domain, LoRA-optimized models outperform zero-shot and few-shot methods when trained with sufficient data, despite using the smallest model PaLM 2S. Even with only 5 training episodes (LT-5), the LoRA tuning outperforms all untuned models on low-level instructions. For high-level instruction, 1,000 episodes are required. The best LoRA-optimized model achieves 71.5% accuracy on high-level instructions and 86.6% on low-level instructions. Among the no-fire methods, AitW with PaLM 2L performs best (56.7%) on low-level instructions, while M3A with GPT-4 performs best (42.1%) on high-level instructions. likely benefiting from the integration of high-level reasoning. Surprisingly, performance with few shots is generally lower than with zero shots across the board. The results highlight the important benefits of fine-tuning in the field, especially for more data.

This work introduced ANDROIDCONTROL, a large and diverse dataset designed to study model performance on low- and high-level tasks, both in-domain and out-of-domain, as training data is put into practice. At scale. Through evaluation of fine-tuned LoRA models on this dataset, it is predicted that achieving 95% accuracy on low-level tasks in the domain would require approximately 1 million training episodes, while a rate Episode completion rate of 95% on high-level tasks in 5 steps. -domain tasks would require around 2 million episodes. These results suggest that, although potentially costly, fine-tuning may be a viable approach to achieving high domain performance in complex tasks. However, out-of-domain performance requires one to two orders of magnitude more data, indicating that fine-tuning alone may not scale well and that additional approaches may be beneficial, particularly for robust performance on tasks high level outside the domain.

Check Paper. All credit for this research goes to the researchers of this project. Also don’t forget to follow us on Twitter. Join our Telegram channel, Discord ChannelAnd LinkedIn Groops.

If you like our work, you will love our bulletin..

Don't forget to join our 44,000+ ML subreddit