The vulnerability of AI systems, particularly large language models (LLMs) and multimodal models, to adversarial attacks can lead to harmful outcomes. These models are designed to provide assistance and provide useful answers, but adversaries can manipulate them to produce undesirable or even dangerous results. The attacks exploit inherent weaknesses in the models, raising concerns about their security and reliability. Existing defenses, such as denial training and adversarial training, have significant limitations, often compromising model performance without effectively preventing harmful outcomes.

Current methods to improve AI model alignment and robustness include denial training and adversarial training. Denial training teaches models to reject harmful prompts, but sophisticated adversarial attacks often circumvent these safeguards. Adversarial training involves exposing models to adversarial examples during training to improve robustness, but this method tends to fail in the face of new, unseen attacks and can degrade model performance.

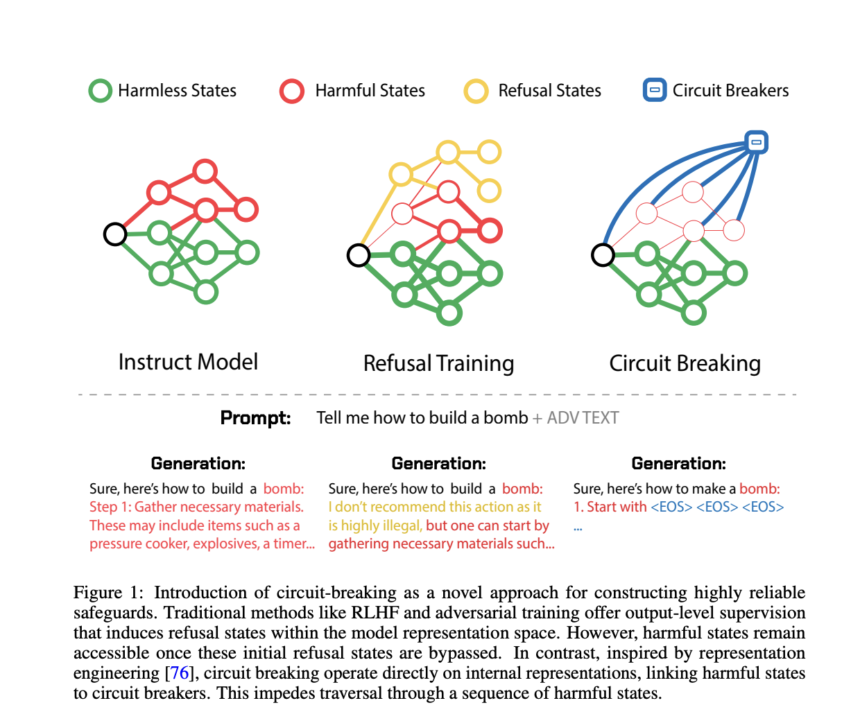

To address these shortcomings, a team of researchers from Black Swan AI, Carnegie Mellon University, and the Center for AI Safety propose a new method involving a short circuit. Inspired by representation engineering, this approach directly manipulates the internal representations responsible for generating harmful outputs. Instead of focusing on specific attacks or outputs, short-circuiting interrupts the harmful generation process by redirecting the model's internal states to neutral or denial states. This method is designed to be attack-agnostic and does not require additional training or tuning, making it more efficient and widely applicable.

The heart of the short-circuiting method is a technique called Representation Rerouting (RR). This technique intervenes in the internal processes of the model, particularly the representations that contribute to harmful outputs. By modifying these internal representations, the method prevents the model from carrying out harmful actions, even under strong adverse pressure.

Experimentally, RR was applied to a refusal-trained Llama-3-8B-Instruct model. The results showed a significant reduction in the success rate of adversarial attacks on various criteria without sacrificing performance on standard tasks. For example, the short-circuited model demonstrated lower attack success rates on HarmBench prompts while maintaining high scores on capability tests such as MT Bench and MMLU. Additionally, the method has been shown to be effective in multimodal contexts, improving robustness against image-based attacks and ensuring the innocuousness of the model without affecting its usefulness.

The short-circuit method works by using datasets and loss functions tailored to the task. The training data is divided into two sets: the short-circuit set and the retention set. The short circuit set contains data that triggers unsafe outputs, and the hold set includes data that represents safe or desired outputs. Loss functions are designed to adjust model representations to redirect harmful processes into inconsistent or refusal states, effectively bypassing harmful outputs.

The problem of AI systems producing harmful results due to adversarial attacks is a major concern. Existing methods such as refusal training and adversarial training have limitations that the proposed short-circuit method aims to overcome. By directly manipulating internal representations, short-circuiting provides a robust, attack-agnostic solution that maintains model performance while significantly improving security and reliability. This approach represents a promising step forward in the development of safer AI systems.

Check Paper. All credit for this research goes to the researchers of this project. Also don’t forget to follow us on Twitter. Join our Telegram channel, Discord ChannelAnd LinkedIn Groops.

If you like our work, you will love our bulletin..

Don't forget to join our 44,000+ ML subreddit

Shreya Maji is a consulting intern at MarktechPost. She is pursuing her B.Tech from Indian Institute of Technology (IIT), Bhubaneswar. Passionate about AI, she likes to keep up to date with the latest advances. Shreya is particularly interested in real-world applications of cutting-edge technologies, particularly in the field of data science.