Federated learning (FL) has become an essential technology in recent years, enabling the training of collaborative models between disparate entities without centralizing data. This approach is particularly beneficial when organizations or individuals need to cooperate on model development without compromising sensitive data.

By keeping data locally and performing model updates locally, FL reduces communication costs and facilitates the integration of heterogeneous data, retaining the unique characteristics of each participant's dataset. However, despite its advantages, FL still presents risks of indirect information leakage, especially during the model aggregation phase.

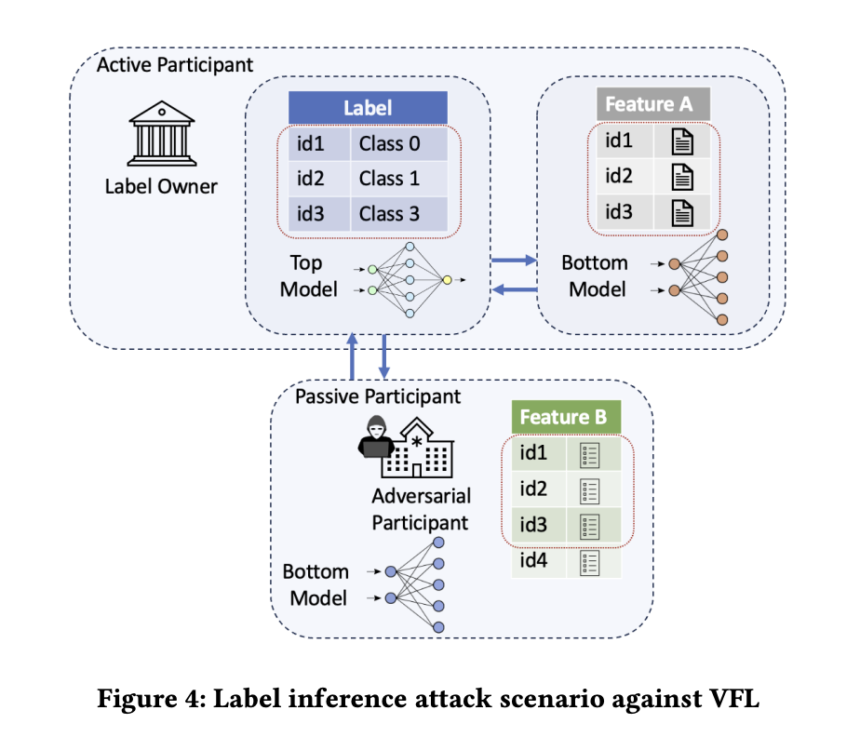

FL encompasses various data partitioning strategies, including horizontal FL (HFL), vertical FL (VFL), and transfer learning. HFL involves parts with the same attribute space but different sample spaces, making it suitable for scenarios where regional branches of the same company aim to create a richer dataset. Conversely, VFL involves non-competing entities with vertically partitioned data sharing overlapping but differing data samples in feature space.

Finally, transfer learning is applicable when there is little overlap in data samples and features among multiple subjects with heterogeneous distributions. Each category presents unique challenges and benefits, with HFL emphasizing independent training, VFL leveraging deeper attribute dimensions for more accurate models, and Transfer Learning addressing scenarios with diverse data distributions.

Despite the lack of raw data sharing in Florida, the combination of information across features and the presence of compromised participants can still lead to a privacy leak. Label inference attacks pose a significant problem in this context because they can exploit the sensitivity of labels to reveal confidential customer information.

To solve this problem, researchers from the University of Pavia are focusing on defending against label inference attacks in the VFL scenario. They consider attacks and propose a defense mechanism called KD𝑘 (Knowledge Discovery and 𝑘-anonymity).

KD𝑘 leverages a knowledge distillation (KD) stage and an obfuscation algorithm to improve privacy protection. KD is a machine learning compression technique that transfers knowledge from a larger teacher model to a smaller student model, producing softer probability distributions instead of strict labels.

In their framework, an active participant includes a network of teachers to generate soft labels, which are then processed using 𝑘-anonymity to add uncertainty. By grouping labels 𝑘 with the highest probabilities, it becomes difficult for attackers to accurately infer the most probable label. The high-end model of the server then uses this partially anonymized data for collaborative VFL tasks.

Experimental results illustrate a notable reduction in the accuracy of label inference attacks in all three types described by Fu et al., confirming the effectiveness of the proposed defense mechanism. The research contributions encompass the development of a robust countermeasure designed to combat label inference attacks, validated through an extensive experimental campaign. Furthermore, the study provides a comprehensive comparison with existing defense strategies, highlighting the superior performance of the proposed approach.

Check Paper. All credit for this research goes to the researchers of this project. Also don’t forget to follow us on Twitter. Join our Telegram channel, Discord ChannelAnd LinkedIn Groops.

If you like our work, you will love our bulletin..

Don't forget to join our 40,000+ ML subreddit

Arshad is an intern at MarktechPost. He is currently pursuing his Int. MSc Physics from Indian Institute of Technology Kharagpur. Understanding things at a fundamental level leads to new discoveries which lead to technological advancements. He is passionate about fundamentally understanding nature using tools such as mathematical models, ML models and AI.