Climate models are a key technology for predicting the impacts of climate change. By running simulations of Earth's climate, scientists and policymakers can estimate conditions such as sea level rise, flooding, and rising temperatures, and make decisions about how to respond appropriately. But current climate models struggle to provide this information quickly or affordably enough to be useful at smaller scales, like the size of a city.

Now, the authors of a new open-access paper published in the Journal of Advances in Modeling Earth Systems have found a way to use machine learning to keep the benefits of current climate models while reducing the computational cost of running them.

“This turns conventional wisdom on its head,” says Sai Ravela, a senior research scientist in MIT's Department of Earth, Atmospheric, and Planetary Sciences (EAPS), who wrote the paper with Anamitra Saha, a postdoctoral fellow at EAPS.

Traditional wisdom

In climate modeling, downscaling is the process of using a global climate model with coarse resolution to generate finer details over smaller regions. Imagine a digital image: a global model is a large image of the world with a low number of pixels. To downscale, you zoom in only on the section of the photo you want to look at, for example Boston. But since the original image was low resolution, the new version is blurry; it doesn't give enough detail to be particularly useful.
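The zoomed-in photo analogy can be made concrete with a minimal sketch. It assumes a made-up 2x2 patch of coarse model output (the values and the `zoom` helper are illustrative, not taken from the paper) and simply repeats each coarse cell to fill a finer grid. The result has more pixels but no new values, which is why it looks blurry.

```python
def zoom(coarse, factor):
    """Nearest-neighbour upsampling: repeat each coarse cell factor x factor times."""
    fine = []
    for row in coarse:
        # Stretch the row horizontally by repeating every value.
        wide = [value for value in row for _ in range(factor)]
        # Stretch vertically by repeating the widened row.
        fine.extend(list(wide) for _ in range(factor))
    return fine


# Hypothetical 2x2 patch of coarse model output (say, rainfall in mm/day).
coarse = [[2.0, 5.0],
          [3.0, 9.0]]

fine = zoom(coarse, 4)  # an 8x8 grid covering the same area

distinct_coarse = {value for row in coarse for value in row}
distinct_fine = {value for row in fine for value in row}

# More pixels, but exactly the same set of values: no detail was added.
print(len(fine), len(fine[0]), distinct_fine == distinct_coarse)
```

Any real downscaling method has to supply the missing detail from somewhere else, which is the point the next paragraph takes up.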

“If you go from coarse resolution to fine resolution, you have to add information in one way or another,” says Saha. Downscaling attempts to add this information by filling in the missing pixels. “This addition of information can happen in two ways: either it can come from theory, or it can come from data.”

Conventional downscaling often involves using physics-based models (which describe, for example, how air rises, cools, and condenses, or how the local landscape shapes the weather) and supplementing them with statistical data drawn from historical observations. But this method is computationally demanding: it takes a lot of time and computing power to run, which also makes it expensive.

A bit of both

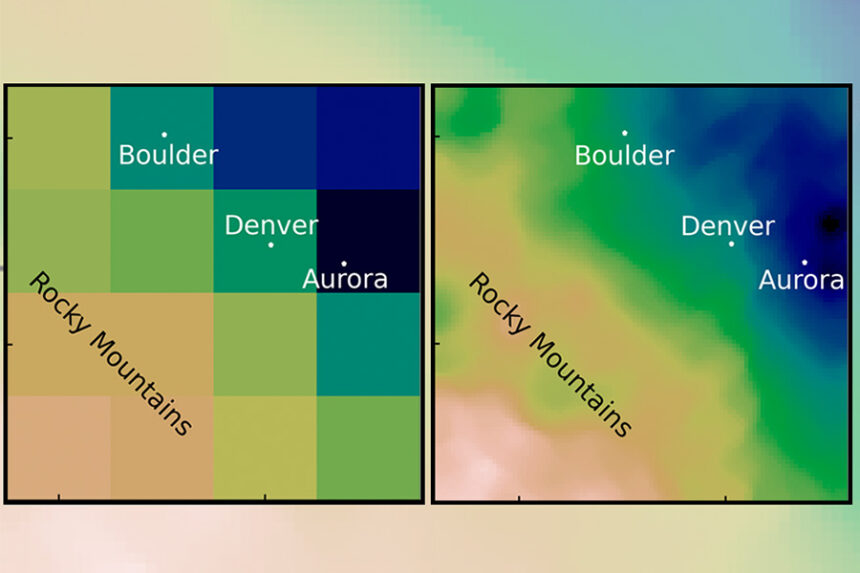

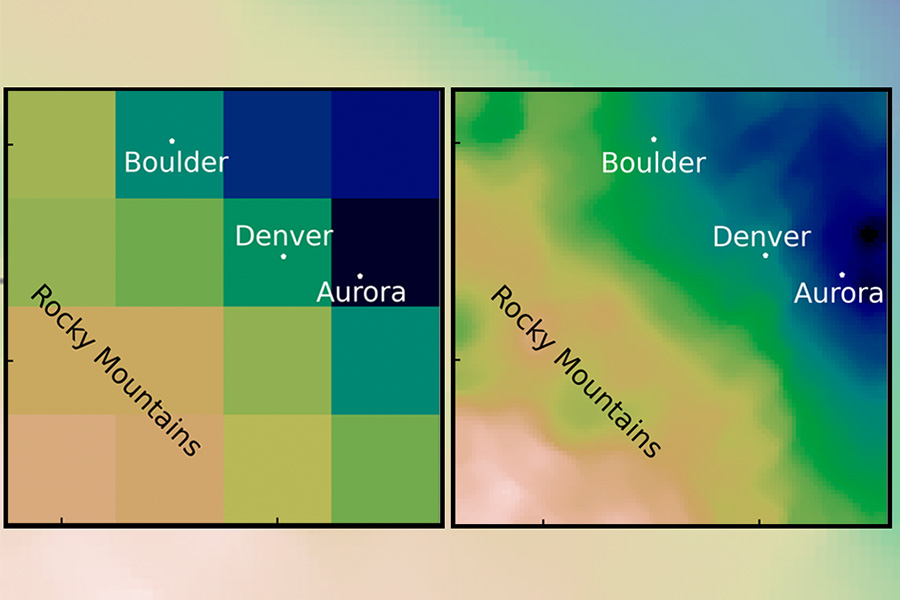

In their new paper, Saha and Ravela found a different way to add the missing information. They used a machine learning technique called adversarial learning, which involves two machines: one generates data to fill in the photo, while the other judges each sample by comparing it to real data. If the second machine thinks the image is fake, the first machine must try again until it convinces the second. The end goal of the process is to create super-resolution data.
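The generate-and-judge loop can be illustrated with a toy sketch. This is not the authors' model: it assumes one-dimensional "real data" drawn from a normal distribution with a made-up mean of 4.0, a generator with a single shift parameter `theta`, and a logistic-regression judge with parameters `w` and `b`. All names and numbers are hypothetical.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def train_toy_gan(steps=50, batch=64, lr=0.05, seed=0):
    """Alternate judge and generator updates on a 1-D toy problem."""
    rng = random.Random(seed)
    theta = 0.0      # generator: fake sample = noise + theta
    w, b = 0.0, 0.0  # judge: D(x) = sigmoid(w * x + b), its belief that x is real
    for _ in range(steps):
        real = [rng.gauss(4.0, 1.0) for _ in range(batch)]  # assumed "real" data
        fake = [rng.gauss(0.0, 1.0) + theta for _ in range(batch)]

        # Judge step: gradient ascent on log D(real) + log(1 - D(fake)).
        grad_w = grad_b = 0.0
        for x in real:
            d = sigmoid(w * x + b)
            grad_w += (1.0 - d) * x
            grad_b += 1.0 - d
        for x in fake:
            d = sigmoid(w * x + b)
            grad_w -= d * x
            grad_b -= d
        w += lr * grad_w / batch
        b += lr * grad_b / batch

        # Generator step: gradient ascent on log D(fake) with respect to theta,
        # i.e. shift the fakes in whatever direction the judge finds more real.
        grad_theta = sum((1.0 - sigmoid(w * x + b)) * w for x in fake) / batch
        theta += lr * grad_theta
    return theta, w, b


theta, w, b = train_toy_gan()
print(f"generator shift after training: {theta:.2f}")
```

In this sketch the judge first learns to tell the two sets of samples apart, and the generator then shifts its output toward the real data to fool it. A super-resolution setup would replace the single shift parameter with a network that produces high-resolution fields, but the alternating structure is the same.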

Using machine learning techniques such as adversarial learning is not a new idea in climate modeling; where these techniques currently struggle is in handling large amounts of basic physics, such as conservation laws. The researchers discovered that simplifying the physics going in and supplementing it with statistics from historical data was enough to generate the results they needed.

“If you augment machine learning with information from statistics and simplified physics, then all of a sudden it’s magic,” says Ravela. He and Saha began by estimating extreme precipitation amounts, removing the more complex physical equations and focusing on water vapor and land topography. They then generated general precipitation patterns for both mountainous Denver and flat Chicago, applying historical accounts to correct the results. “It gives us extremes, like physics does, at a much lower cost. And that gives us statistics-like speeds, but with much higher resolution.”

Another unexpected benefit of the results was how little training data was required. “The fact that a little physics and a little statistics are enough to improve the performance of the ML (machine learning) model… was actually not obvious from the beginning,” says Saha. The model takes just a few hours to train and can produce results in minutes, an improvement over the months it takes to run other models.

Quickly quantify risk

Being able to run the models quickly and often is a key requirement for stakeholders such as insurance companies and local policymakers. Ravela gives the example of Bangladesh: by seeing how extreme weather events will affect the country, decisions about what crops to grow or where populations should migrate can be made as soon as possible, taking into account a very wide range of conditions and uncertainties.

“We cannot wait months or years to be able to quantify this risk,” he says. “You have to look into the future and take into account a lot of uncertainties to be able to say what might be a good decision.”

Although the current model only looks at extreme precipitation, the next step in the project is training it to examine other critical events, such as tropical storms, winds, and temperature. Once the model is more robust, Ravela hopes to apply it to other places, like Boston and Puerto Rico, as part of a Climate Grand Challenges project.

“We are very excited about both the methodology we have developed and the potential applications it could lead to,” he says.