Research

DeepNash learns to play Stratego from scratch by combining game theory and model-free Deep RL

Gaming artificial intelligence (AI) systems have crossed a new frontier. Stratego, the classic board game, more complex than chess and Go, and more clever than poker, has now been mastered. Published in Sciencewe present DeepNashan AI agent who learned the game from scratch to a human expert level by playing against itself.

DeepNash uses a new approach, based on game theory and model-free deep reinforcement learning. His play style converges towards a Nash equilibrium, meaning his game is very difficult for an opponent to exploit. So difficult, in fact, that DeepNash reached the top three all-time among human experts on the world's largest online Stratego platform, Gravon.

Board games have always been a measure of progress in the field of AI, allowing the study of how humans and machines develop and execute strategies in a controlled environment. Unlike chess and Go, Stratego is a game of imperfect information: players cannot directly observe the identity of their opponent's pieces.

This complexity means that other AI-based Stratego systems have struggled to rise above the amateur level. This also means that a very successful AI technique called “game tree search,” previously used to master many perfect-information games, is not scalable enough for Stratego. For this reason, DeepNash goes well beyond searching the game tree.

The value of mastering Stratego goes beyond gaming. In pursuit of our mission to solve the intelligence problem to advance science and benefit humanity, we must build advanced AI systems capable of operate in complex, real-world situations with limited information about other agents and people. Our paper shows how DeepNash can be applied in situations of uncertainty and successfully balance results to help solve complex problems.

Getting to know Stratego

Stratego is a turn-based capture the flag game. It's a game of bluffing and tactics, information gathering and subtle maneuvering. And it's a zero-sum game, so any gain for one player represents a loss of the same magnitude for their opponent.

Stratego is a challenge for AI, in part because it is a game of imperfect information. Both players begin by arranging their 40 playing pieces in the starting formation of their choice, initially hidden from each other at the start of the game. Since both actors do not have access to the same knowledge, they must balance all possible outcomes when making a decision, which provides a difficult benchmark for studying strategic interactions. Coin types and their classifications are shown below.

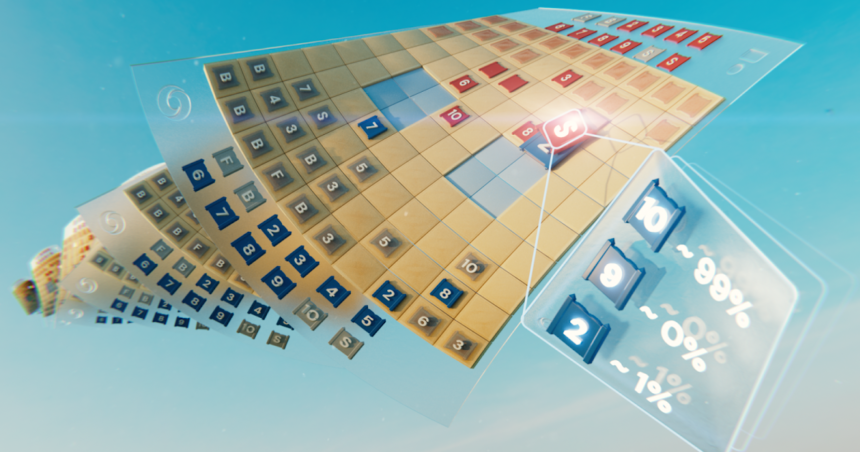

LEFT: Classification of pieces. In battles, higher-ranked pieces win, except that 10 (Marshal) loses when attacked by a spy, and bombs always win except when captured by a miner.

Medium: A possible starting lineup. Notice how the flag is stored safely in the back, flanked by protective bombs. The two pale blue areas are “lakes” and you never enter them.

RIGHT: A play in play, showing Blue's Spy capturing Red's 10.

Information is hard-earned in Stratego. An opposing piece's identity is usually only revealed when it encounters the other player on the battlefield. This contrasts sharply with perfect information games such as chess or Go, in which the location and identity of each piece are known to both players.

The machine learning approaches that work so well on perfect information games, such as DeepMind's AlphaZero, are not easily transferable to Stratego. The need to make decisions with imperfect information and the possibility of bluffing brings Stratego closer to Texas hold'em poker and requires human ability, once noted by the American writer Jack London: “Life is not always a It's about holding good cards, but sometimes I play a bad hand.

The AI techniques that work so well in games like Texas hold'em don't transfer to Stratego, however, due to the length of the game – often hundreds of moves before a player wins. Reasoning in Stratego must be performed over a large number of sequential actions without obvious insight into how each action contributes to the end result.

Finally, the number of possible game states (expressed by the “game tree complexity”) is out of the ordinary compared to chess, Go, and poker, making them incredibly difficult to solve. This is what excited us about Stratego and why it has been a decades-long challenge for the AI community.

The extent of the differences between chess, poker, Go and Stratego.

In search of balance

DeepNash uses a new approach based on a combination of game theory and model-free deep reinforcement learning. “Model-free” means that DeepNash does not attempt to explicitly model its opponent's private game state during the game. Particularly in the early stages of the game, when DeepNash knows little about its opponent's pieces, such modeling would be ineffective or even impossible.

And because the complexity of Stratego's game tree is so vast, DeepNash cannot use a robust approach to AI-driven gaming – Monte Carlo tree search. Tree search has been a key ingredient in many landmark AI achievements for less complex board games and poker.

Instead, DeepNash leverages a new algorithmic idea from game theory that we call Regularized Nash Dynamics (R-NaD). Working on an unprecedented scale, R-NaD steers DeepNash's learning behavior toward what's called a Nash equilibrium (dive into the technical details in our newspaper).

The gaming behavior that results in a Nash equilibrium is unexploitable over time. If a person or machine played perfectly unexploitable Stratego, the worst win rate they could achieve would be 50%, and only if they faced an equally perfect opponent.

In matches against top Stratego bots – including several winners of the Computer Stratego World Championship – DeepNash's win rate exceeded 97%, and was often 100%. Facing the best expert human players on the Gravon gaming platform, DeepNash achieved an 84% win rate, earning it one of the top three rankings of all time.

Expect the unexpected

To achieve these results, DeepNash demonstrated remarkable behaviors both during its initial coin deployment phase and during the gameplay phase. To become difficult to exploit, DeepNash has developed an unpredictable strategy. This means creating initial deployments that are varied enough to prevent your opponent from spotting patterns over a series of games. And during the game phase, DeepNash randomizes between seemingly equivalent actions to avoid exploitable trends.

Stratego players strive to be unpredictable, so it helps to keep information hidden. DeepNash demonstrates how it values information in quite a striking way. In the example below, against a human player, DeepNash (blue) sacrificed, among other pieces, a 7 (Major) and an 8 (Colonel) at the start of the game and was thus able to locate the 10 (Marshal) of the opponent, 9 (General), one 8 and two 7s.

In this early game situation, DeepNash (blue) has already located many of its opponent's most powerful pieces, while keeping its own key pieces secret.

These efforts left DeepNash at a material disadvantage; he lost a 7 and an 8 while his human opponent kept all his pieces ranked 7 and above. However, with solid information about his opponent's top brass, DeepNash rated his chances of victory at 70% – and he won.

The art of bluffing

Like in poker, a good Stratego player must sometimes represent strength, even when weak. DeepNash has learned various bluff tactics. In the example below, DeepNash uses a 2 (a weak Scout, unknown to his opponent) as if it were a high-ranking piece, chasing his opponent's known 8. The human opponent decides that the pursuer is most likely a 10, and so attempts to lure him into an ambush set up by their spy. This DeepNash tactic, risking only a minor piece, succeeds in flushing out and eliminating his opponent's Spy, a critical piece.

The human player (red) is convinced that the unknown piece chasing his 8 must be DeepNash's 10 (note: DeepNash had already lost its only 9).

To learn more, watch these four videos of full games played by DeepNash against (anonymized) human experts: Game 1, Game 2, Game 3, Game 4.

DeepNash's level of play surprised me. I had never heard of an artificial Stratego player getting anywhere near the level needed to win a match against an experienced human player. But after playing against DeepNash myself, I wasn't surprised by the top 3 ranking it subsequently achieved on the Gravon platform. I think he would do very well if he was allowed to compete in the Human World Championships.

Vincent de Boer, co-author of the article and former Stratego world champion

Future directions

While we developed DeepNash for the highly defined world of Stratego, our new R-NaD method can be directly applied to other two-player zero-sum games of perfect or imperfect information. R-NaD has the potential to generalize well beyond two-player game settings to solve large-scale real-world problems, often characterized by imperfect information and astronomical state spaces.

We also hope that R-NaD can help unlock new applications of AI in areas that feature large numbers of human or AI participants with different goals and who might not have information about the intentions of the participants. others or about what is happening in their environment, as in the large-scale optimization of traffic management to reduce driver travel times and associated vehicle emissions.

By creating a generalizable AI system that is robust in the face of uncertainty, we hope to extend AI's problem-solving capabilities in our inherently unpredictable world.

Learn more about DeepNash by reading our article in Science.

For researchers interested in trying R-NaD or working with our proposed new method, we have an open source version our code.