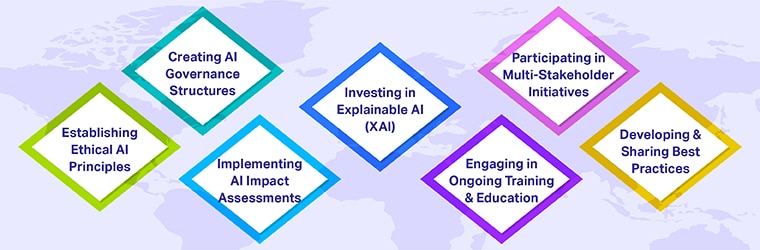

Main measures companies are implementing to comply with evolving AI regulations

Companies are taking a range of steps to comply with evolving regulations and guidelines on artificial intelligence (AI). These efforts aim not only to ensure compliance but also to build trust in AI technologies among users and regulators. The main measures companies are implementing include the following:

Establishing ethical principles for AI

Many organizations are developing and publicly sharing their own AI ethics principles. These principles often reflect widely shared values such as fairness, transparency, accountability and respect for user privacy. By establishing these frameworks, companies lay the foundation for the ethical development and use of AI within their operations.

Creating AI governance structures

To ensure compliance with internal and external guidelines and regulations, companies are establishing governance structures dedicated to AI oversight. These may include AI ethics boards, review committees and dedicated roles such as AI ethics officers who are responsible for the ethical deployment of AI technologies. Such structures evaluate AI projects for compliance and ethical risk from the design phase through deployment.

Implementing AI impact assessments

Similar to the data protection impact assessments (DPIAs) required under the GDPR, AI impact assessments are becoming increasingly common practice. These assessments help identify potential risks and ethical concerns associated with AI applications, including impacts on privacy, security, fairness and transparency. Performing them early and repeating them throughout the AI lifecycle allows businesses to mitigate risks proactively.

Investing in explainable AI (XAI)

Explainability is a key requirement in many AI guidelines and regulations, particularly for high-risk AI applications. Companies are investing in explainable AI technologies that make the decision-making processes of AI systems transparent and understandable to humans. This not only helps with regulatory compliance but also builds trust with users and stakeholders.
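To make the idea of a transparent decision concrete, here is a minimal sketch assuming a simple linear scoring model. The class name, feature names, weights and baseline are hypothetical illustrations, not any company's or regulator's method. For a linear model, each feature's contribution relative to a reference (baseline) input is its weight multiplied by the difference from the baseline, and these contributions add up exactly to the change in score, which gives a human reviewer a breakdown they can check.

```python
from dataclasses import dataclass


@dataclass
class LinearScoringModel:
    """Hypothetical linear model: score = bias + sum(weight * feature)."""
    weights: dict[str, float]
    bias: float

    def score(self, features: dict[str, float]) -> float:
        return self.bias + sum(w * features[name] for name, w in self.weights.items())

    def explain(self, features: dict[str, float],
                baseline: dict[str, float]) -> dict[str, float]:
        # Contribution of each feature relative to the baseline input.
        # For a linear model these contributions sum exactly to
        # score(features) - score(baseline).
        return {name: w * (features[name] - baseline[name])
                for name, w in self.weights.items()}


# Illustrative values only (hypothetical credit-style features).
model = LinearScoringModel(
    weights={"income": 0.5, "debt": -0.8, "years_employed": 0.3},
    bias=1.0,
)
applicant = {"income": 4.0, "debt": 2.0, "years_employed": 5.0}
baseline = {"income": 3.0, "debt": 1.0, "years_employed": 2.0}

contribs = model.explain(applicant, baseline)

# Present the explanation ranked by the size of each feature's effect.
for name, c in sorted(contribs.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name}: {c:+.2f}")
```

The linear case is shown only because its explanation is exact; production systems often use more complex models together with model-agnostic techniques such as SHAP or LIME, whose explanations are approximations.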

Engaging in continuous training and education

The rapid evolution of AI technology and its regulatory environment requires continuous learning and adaptation. Companies are investing in ongoing training so their teams stay up to date with the latest advances in AI, ethical considerations and regulatory requirements. This includes understanding how AI affects different sectors and how to address ethical dilemmas.

Participating in multi-stakeholder initiatives

Many organizations are joining forces with other businesses, governments, academic institutions and civil society organizations to shape the future of AI regulation. Engaging with initiatives such as the Global Partnership on AI (GPAI) or aligning with the AI Principles of the Organisation for Economic Co-operation and Development (OECD) allows companies to contribute their expertise and stay informed of best practices and emerging regulatory trends.

Developing and sharing best practices

As companies navigate the complexities of AI regulation and ethical considerations, many are documenting and sharing their experiences and best practices. This includes publishing case studies, contributing to industry guidelines and participating in forums and conferences dedicated to responsible AI.

These steps illustrate a comprehensive approach to the responsible development and deployment of AI, in line with global efforts to ensure that AI technologies benefit society while minimizing risks and ethical concerns. As AI continues to advance, approaches to compliance will likely evolve, requiring continued vigilance and adaptation by businesses.