To design proteins with useful functions, researchers typically start with a natural protein that has a desirable function, such as emitting fluorescent light, and subject it to many rounds of random mutation that eventually generate an optimized version of the protein.

This process has yielded optimized versions of many important proteins, including green fluorescent protein (GFP). However, for other proteins it has proven difficult to generate an optimized version. MIT researchers have now developed a computational approach that makes it easier to predict which mutations will lead to better proteins, based on a relatively small amount of data.

Using this model, the researchers generated proteins with mutations that were predicted to lead to improved versions of GFP and of a protein from adeno-associated virus (AAV), which is used to deliver DNA for gene therapy. They hope the approach can also be used to develop additional tools for neuroscience research and medical applications.

“Protein design is a difficult problem because the mapping from DNA sequence to protein structure and function is really complex. There may be a great protein 10 changes away in the sequence, but each intermediate change may correspond to a completely nonfunctional protein. It's like trying to find your way to the river basin in a mountain range, when there are craggy peaks along the way that block your view. The current work tries to make the riverbed easier to find,” says Ila Fiete, a professor of brain and cognitive sciences at MIT, a member of MIT's McGovern Institute for Brain Research, director of the K. Lisa Yang Integrative Computational Neuroscience Center, and one of the senior authors of the study.

Regina Barzilay, Distinguished Professor of AI and Health at MIT's School of Engineering, and Tommi Jaakkola, Thomas Siebel Professor of Electrical Engineering and Computer Science at MIT, are also senior authors of an open-access paper on the work, which will be presented at the International Conference on Learning Representations in May. MIT graduate students Andrew Kirjner and Jason Yim are the lead authors of the study. Other authors include Shahar Bracha, a postdoctoral fellow at MIT, and Raman Samusevich, a graduate student at the Czech Technical University.

Optimizing proteins

Many natural proteins have functions that could make them useful for research or medical applications, but they require a little extra engineering to optimize them. In this study, the researchers originally wanted to develop proteins that could be used in living cells as voltage indicators. These proteins, produced by certain bacteria and algae, emit fluorescent light when an electrical potential is detected. If designed for use in mammalian cells, these proteins could allow researchers to measure neuronal activity without using electrodes.

Although decades of research have gone into engineering these proteins to produce a stronger fluorescent signal on a faster timescale, they have not become effective enough for widespread use. Bracha, who works in Edward Boyden's lab at the McGovern Institute, contacted Fiete's lab to see if they could work together on a computational approach that could speed up the protein optimization process.

“This work illustrates the human serendipity that characterizes so many scientific discoveries,” says Fiete. “It grew out of the Yang Tan Collective Retreat, a scientific meeting of researchers from multiple MIT centers with distinct missions unified by the common support of K. Lisa Yang. We learned that some of our interests and tools in modeling how the brain learns and optimizes could be applied in the entirely different field of protein design, as it is practiced in the Boyden lab.”

For any given protein that researchers might want to optimize, there is a nearly infinite number of possible sequences that could be generated by swapping in different amino acids at each point in the sequence. With so many possible variants, it is impossible to test them all experimentally, so researchers have turned to computational modeling to try to predict which ones will work best.
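To give a sense of the scale involved, the short sketch below counts how many distinct sequences lie within a given number of amino acid substitutions of a starting protein. The protein length of 238 (roughly the size of wild-type GFP) and the function name `variants_within` are assumptions chosen for illustration; they are not taken from the study.

```python
from math import comb

def variants_within(length, max_mutations, alphabet=20):
    """Count sequences that differ from a starting sequence at no more
    than `max_mutations` positions (substitutions only).

    For exactly k changed positions there are C(length, k) ways to pick
    the positions and (alphabet - 1) alternative residues at each one.
    """
    return sum(
        comb(length, k) * (alphabet - 1) ** k
        for k in range(max_mutations + 1)
    )

# Assumed length for illustration: a GFP-sized protein of 238 residues.
GFP_LENGTH = 238

for k in (1, 2, 7):
    print(f"within {k} substitution(s): {variants_within(GFP_LENGTH, k):,}")

# Every possible sequence of that length, for comparison.
print(f"all sequences: about 10^{len(str(20 ** GFP_LENGTH)) - 1}")
```

Even a handful of substitutions produces far more candidates than any lab could screen, which is why a predictive model is attractive.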

In this study, the researchers set out to overcome these challenges, using GFP data to develop and test a computer model capable of predicting better versions of the protein.

They began by training a type of model known as a convolutional neural network (CNN) on experimental data consisting of GFP sequences and their brightness – the feature they wanted to optimize.
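The article does not describe the network's input format or architecture. As a rough illustration only, the sketch below shows one common way a protein sequence can be presented to a convolutional model: each residue becomes a one-hot vector, and a filter slides along the sequence to score short local patterns. The names `one_hot` and `conv1d` and the hand-written filter are hypothetical; a real CNN would learn many such filters from the sequence and brightness data.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # one-letter codes of the 20 standard amino acids

def one_hot(sequence):
    """Encode a protein sequence as a list of 20-element one-hot rows."""
    index = {aa: i for i, aa in enumerate(AMINO_ACIDS)}
    rows = []
    for aa in sequence:
        row = [0.0] * len(AMINO_ACIDS)
        row[index[aa]] = 1.0
        rows.append(row)
    return rows

def conv1d(encoded, kernel):
    """Slide a single filter (width x 20 weights) along the sequence.

    No padding is used, so the output has len(encoded) - width + 1 values.
    """
    width = len(kernel)
    outputs = []
    for start in range(len(encoded) - width + 1):
        total = 0.0
        for offset in range(width):
            for channel in range(len(AMINO_ACIDS)):
                total += kernel[offset][channel] * encoded[start + offset][channel]
        outputs.append(total)
    return outputs

# Hypothetical hand-built filter that responds to the two-residue motif "AC".
motif_filter = [[0.0] * 20 for _ in range(2)]
motif_filter[0][AMINO_ACIDS.index("A")] = 1.0
motif_filter[1][AMINO_ACIDS.index("C")] = 1.0

print(conv1d(one_hot("ACAC"), motif_filter))
```

The filter's response is highest wherever the motif occurs, which is the basic mechanism by which convolutional layers pick up local sequence features.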

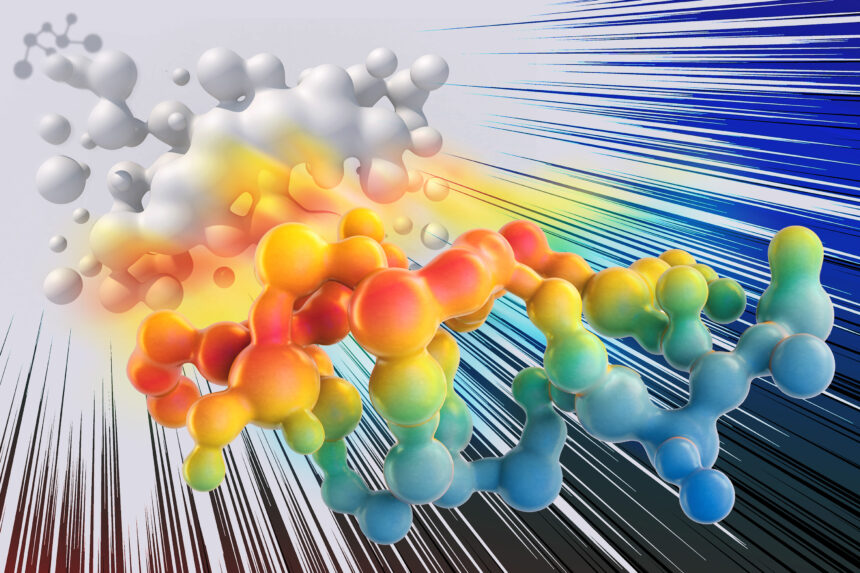

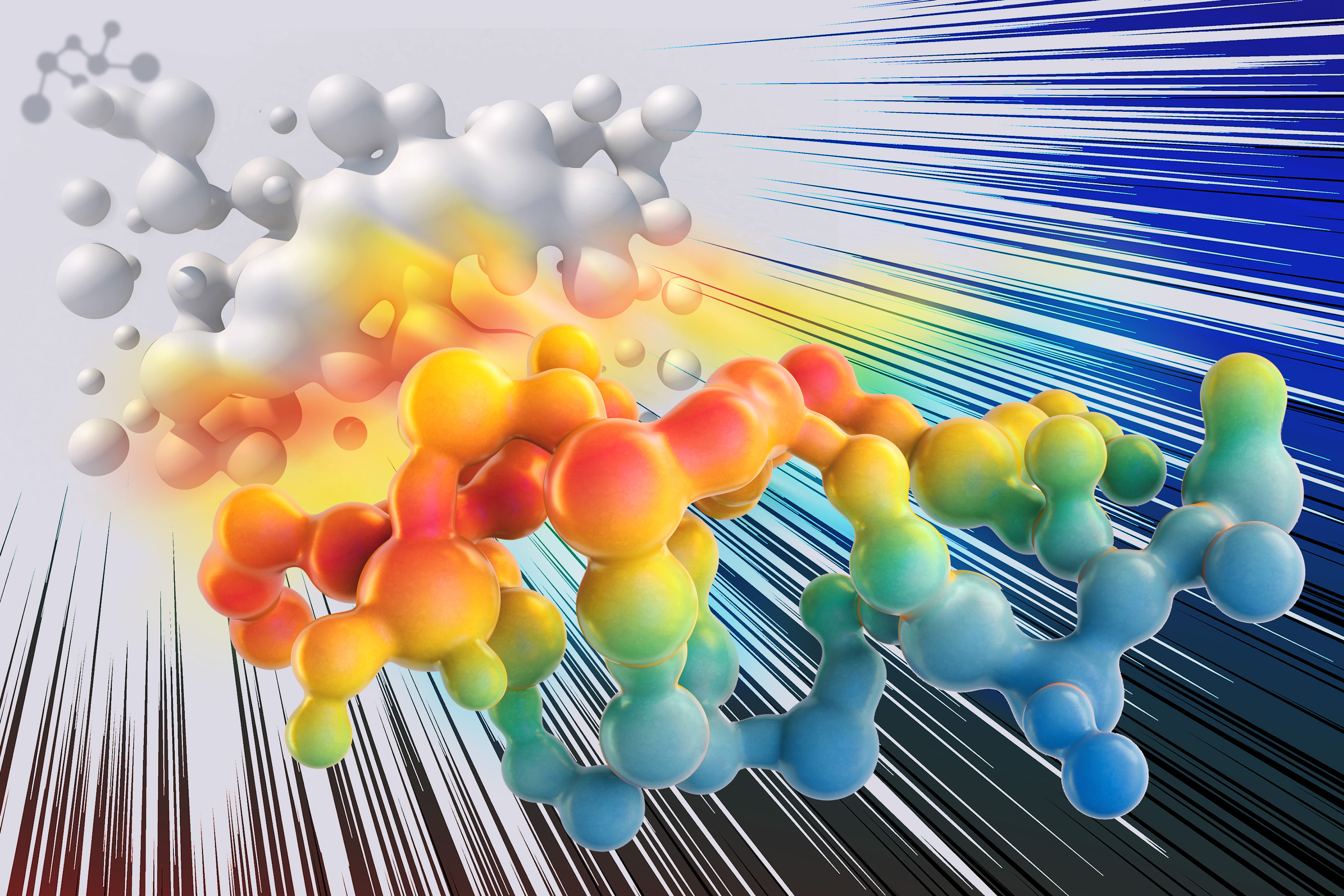

The model was able to create a “fitness landscape” – a three-dimensional map that represents the fitness of a given protein and how it differs from the original sequence – based on a relatively small amount of experimental data (from approximately 1,000 GFP variants).

These landscapes contain peaks that represent fitter proteins and valleys that represent less fit proteins. Predicting the path a protein should take to reach a fitness peak can be difficult, because a protein often has to undergo a mutation that makes it less fit before it can reach a nearby peak of higher fitness. To overcome this problem, the researchers used an existing computational technique to “smooth” the fitness landscape.

Once these small bumps in the landscape were smoothed out, the researchers retrained the CNN model and found that it was able to reach higher fitness peaks more easily. The model was able to predict optimized GFP sequences that differed by up to seven amino acids from the protein sequence they started with, and the best of these proteins was estimated to be about 2.5 times fitter than the original.

“Once we have this landscape that represents what the model thinks is nearby, we smooth it, and then we retrain the model on the smoother version of the landscape,” Kirjner explains. “There is now a smooth path from your starting point to the top, which the model is now able to reach by making small improvements iteratively. The same thing is often impossible for unsmoothed landscapes.”
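The intuition behind smoothing can be shown with a toy example. The sketch below is not the researchers' method: it uses a made-up one-dimensional landscape, a simple moving average as a stand-in for the smoothing technique (which the article does not name), and a greedy climber that only accepts small uphill steps. All names and values are hypothetical.

```python
def smooth(landscape, window=3):
    """Moving-average smoothing; edge positions average over the
    neighbours that exist."""
    half = window // 2
    smoothed = []
    for i in range(len(landscape)):
        neighbourhood = landscape[max(0, i - half): i + half + 1]
        smoothed.append(sum(neighbourhood) / len(neighbourhood))
    return smoothed

def hill_climb(landscape, start=0):
    """Greedy search: step to the better adjacent position until neither
    neighbour is strictly higher, then return the final position."""
    position = start
    while True:
        neighbours = [
            p for p in (position - 1, position + 1)
            if 0 <= p < len(landscape)
        ]
        best = max(neighbours, key=lambda p: landscape[p])
        if landscape[best] <= landscape[position]:
            return position
        position = best

# Toy landscape: an overall upward trend broken up by small dips.
rugged = [0, 3, 2, 5, 4, 7, 6, 9, 8, 11]

stuck_at = hill_climb(rugged)          # stops at the first small bump
reached = hill_climb(smooth(rugged))   # follows the smoothed trend upward

print("rugged landscape, search stops at position", stuck_at)
print("smoothed landscape, search stops at position", reached)
```

On the rugged landscape the climber halts at position 1, the first local bump, while on the smoothed version it walks all the way to position 9, where the true peak of the original landscape lies. In the study the smoothing is applied to the model's learned landscape over protein sequences rather than to a one-dimensional list.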

Proof of concept

The researchers also showed that this approach worked well for identifying new sequences from the viral capsid of adeno-associated virus (AAV), a viral vector commonly used to deliver DNA. In this case, they optimized the capsid for its ability to hold a DNA payload.

“We used GFP and AAV as a proof of concept to show that this is a method that works on very well-characterized data sets and, as such, should be applicable to other protein engineering problems,” Bracha explains.

The researchers now plan to use this computational technique on data generated by Bracha on voltage indicator proteins.

“Dozens of labs have been working on this for two decades, and there’s still nothing better,” she says. “The hope is that with the generation of a smaller dataset, we can train an in silico model and make predictions that might be better than the last two decades of manual testing.”

The research was funded, in part, by the U.S. National Science Foundation, the Machine Learning for Pharmaceutical Discovery and Synthesis Consortium, the Abdul Latif Jameel Clinic for Machine Learning in Health, the DTRA Discovery of Medical Countermeasures Against New and Emerging Threats program, the DARPA Accelerated Molecular Discovery program, the Sanofi Computational Antibody Design grant, the U.S. Office of Naval Research, the Howard Hughes Medical Institute, the National Institutes of Health, the K. Lisa Yang ICoN Center, and the K. Lisa Yang and Hock E. Tan Center for Molecular Therapeutics at MIT.