Natural language processing (NLP) aims to enable computers to understand and interact using human language. A central challenge in NLP is evaluating language models (LMs), which generate responses across a wide range of tasks. That diversity makes response quality hard to assess. Proprietary models such as GPT-4 offer strong evaluation capabilities but raise concerns about transparency, control, and cost. This creates a need for reliable open source alternatives that can evaluate LM outputs without those drawbacks.

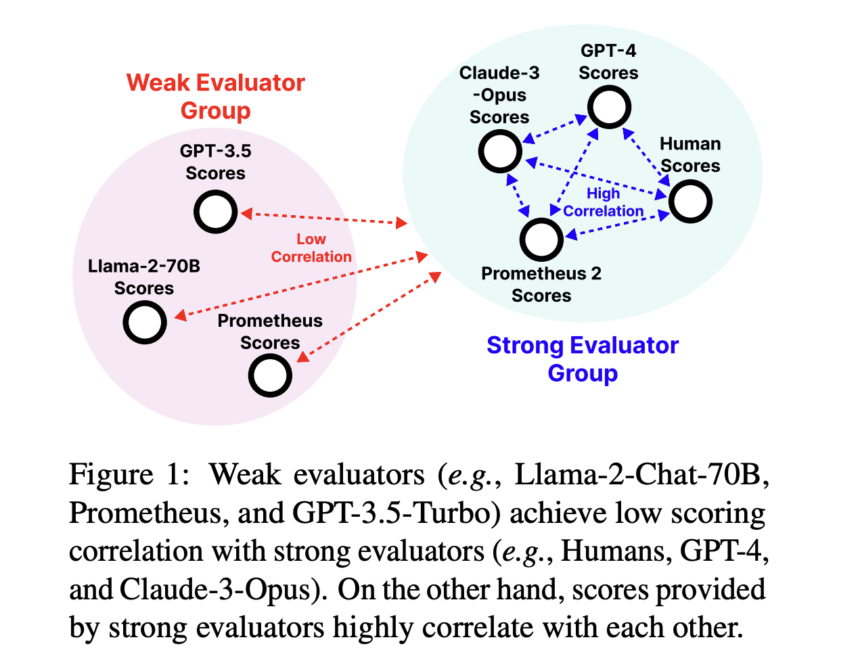

The problem has two parts: evaluating responses accurately and scaling the evaluation mechanism. Existing open source evaluator models have several limitations. Many do not support both direct assessment and pairwise ranking, the two most common forms of evaluation, which limits their adaptability to real-world scenarios. They also focus on general attributes such as helpfulness and harmlessness, and they assign scores that deviate significantly from human evaluations. The result is unreliable assessment and a need for evaluator models that reflect human judgment more accurately.
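To make the two evaluation formats concrete, here is a minimal Python sketch of what each one takes as input and returns. Every name in it (`DirectAssessment`, `PairwiseRanking`, `rank_with_scorer`, `toy_scorer`) is hypothetical, and the 1 to 5 score range is an assumption made for illustration. It is not the interface of any released evaluator.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class DirectAssessment:
    """One response, scored on its own against a criterion."""
    instruction: str
    response: str
    criterion: str


@dataclass(frozen=True)
class PairwiseRanking:
    """Two responses to the same instruction, compared against a criterion."""
    instruction: str
    response_a: str
    response_b: str
    criterion: str


# A direct-assessment evaluator maps one item to an integer score
# (assumed here to lie in 1..5).
Scorer = Callable[[DirectAssessment], int]


def rank_with_scorer(item: PairwiseRanking, scorer: Scorer) -> str:
    """Derive a pairwise verdict ("A", "B", or "tie") from two direct scores."""
    score_a = scorer(DirectAssessment(item.instruction, item.response_a, item.criterion))
    score_b = scorer(DirectAssessment(item.instruction, item.response_b, item.criterion))
    if score_a == score_b:
        return "tie"
    return "A" if score_a > score_b else "B"


def toy_scorer(item: DirectAssessment) -> int:
    """Placeholder scorer: word count clamped to 1..5. Not a real evaluator."""
    return max(1, min(5, len(item.response.split())))


pair = PairwiseRanking(
    instruction="What is the capital of France?",
    response_a="Paris is the capital of France.",
    response_b="Paris.",
    criterion="Is the answer complete?",
)
verdict = rank_with_scorer(pair, toy_scorer)
```

Reducing a pairwise comparison to two independent scores is only one possible design. An evaluator trained for pairwise ranking reads both responses together, which is part of why supporting both formats in a single model is not trivial.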

Research teams have attempted to fill these gaps using various methods, but most approaches lack flexibility and fail to track human evaluations closely. Proprietary models like GPT-4 remain expensive and opaque, which hinders their widespread use as evaluators. Researchers from KAIST AI, LG AI Research, Carnegie Mellon University, MIT, the Allen Institute for AI, and the University of Illinois Chicago introduced Prometheus 2, a new open source evaluator LM designed to assess the responses of other language models. It was developed to provide transparent, scalable, and controllable assessments while matching the assessment quality of proprietary models.

Prometheus 2 was developed by merging two evaluator LMs: one trained exclusively for direct assessment and another trained for pairwise ranking. The merged model is a unified evaluator that performs well in both formats. The researchers used the new Preference Collection dataset, which includes 1,000 evaluation criteria, to further refine the model's capabilities. The merge is linear: it combines the weights of the two separately trained models, so a single model can evaluate LM responses with either direct assessment or pairwise ranking and draw on the strengths of both training formats.
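Linear merging of two models can be sketched as a weighted average of their parameters. The snippet below is a toy illustration in plain Python, not the authors' implementation. The function name `linear_merge`, the mixing coefficient `alpha`, and the tiny parameter dictionaries are all assumptions made for this example, and real checkpoints would hold tensors rather than lists.

```python
from typing import Dict, List

# Toy stand-in for a model checkpoint: parameter name -> flat list of values.
Weights = Dict[str, List[float]]


def linear_merge(direct: Weights, pairwise: Weights, alpha: float = 0.5) -> Weights:
    """Return alpha * direct + (1 - alpha) * pairwise, parameter by parameter."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    if direct.keys() != pairwise.keys():
        raise ValueError("both models must share the same parameter names")
    merged: Weights = {}
    for name in direct:
        a, b = direct[name], pairwise[name]
        if len(a) != len(b):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        merged[name] = [alpha * x + (1.0 - alpha) * y for x, y in zip(a, b)]
    return merged


# Hypothetical weights for the two separately trained evaluators.
direct_eval = {"layer.weight": [0.2, -0.4], "layer.bias": [1.0]}
pairwise_eval = {"layer.weight": [0.6, 0.0], "layer.bias": [0.0]}

merged = linear_merge(direct_eval, pairwise_eval, alpha=0.5)
```

With `alpha=0.5` each merged parameter is the plain average of the two inputs. Merging of this kind only makes sense when both models share the same architecture, which is why the sketch rejects mismatched parameter names or sizes.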

In benchmarking tests, the model showed the highest correlation with human and proprietary evaluators among open source evaluators on four direct assessment benchmarks: Vicuna Bench, MT Bench, FLASK, and Feedback Bench. Pearson correlations exceeded 0.5 on all benchmarks, reaching 0.878 and 0.898 on Feedback Bench for the 7B and 8x7B models, respectively. On four pairwise ranking benchmarks (HHH Alignment, MT Bench Human Judgment, Auto-J Eval, and Preference Bench), Prometheus 2 outperformed existing open source models, achieving accuracy scores exceeding 85%. Preference Bench, the in-domain test set for Prometheus 2, indicated the robustness and versatility of the model.
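The two metrics reported above can be computed as follows. This is a minimal sketch using only the Python standard library: `pearson` implements the standard Pearson correlation formula, and `pairwise_accuracy` is the fraction of comparisons on which the evaluator agrees with the human label. The function names and the sample scores are made up for illustration and are not taken from any benchmark.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between evaluator scores and reference scores."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / math.sqrt(var_x * var_y)


def pairwise_accuracy(predicted: Sequence[str], human: Sequence[str]) -> float:
    """Fraction of pairs where the evaluator picks the same winner as humans."""
    if len(predicted) != len(human) or not human:
        raise ValueError("need two equal-length, non-empty sequences")
    matches = sum(p == h for p, h in zip(predicted, human))
    return matches / len(human)


# Made-up direct assessment scores for five responses.
human_scores = [1, 2, 3, 4, 5]
evaluator_scores = [1, 3, 3, 4, 5]
r = pearson(evaluator_scores, human_scores)

# Made-up pairwise verdicts for four response pairs.
acc = pairwise_accuracy(["A", "B", "A", "B"], ["A", "B", "B", "B"])
```

A Pearson value near 1 means the evaluator's scores rise and fall with the human scores, while pairwise accuracy only checks whether the preferred response matches.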

Prometheus 2 narrowed the performance gap with proprietary evaluators such as GPT-4 across several benchmarks. On the FLASK benchmark, the model halved the correlation gap with GPT-4 when measured against human judgments, and it achieved 84% accuracy on the HHH Alignment evaluation. This highlights the potential for open source evaluators to replace expensive proprietary solutions while still delivering thorough and accurate evaluations.

In conclusion, the lack of transparent, scalable, and adaptable language model evaluators that accurately reflect human judgment is a significant challenge in NLP. To address it, the researchers developed Prometheus 2, a new open source evaluator built by linearly merging two models trained separately on direct assessment and pairwise ranking. The unified model outperformed previous open source evaluators in benchmarking tests, showing high accuracy and correlation while significantly narrowing the performance gap with proprietary models. Prometheus 2 is a significant step forward in open source evaluation and a robust alternative to proprietary solutions.

Check out the Paper and GitHub. All credit for this research goes to the researchers of this project.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent project is the launch of an artificial intelligence media platform, Marktechpost, which stands out for in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable to a wide audience. The platform has more than 2 million monthly views, illustrating its popularity among the public.