Imagine harnessing the power of advanced language models to understand and respond to your customers' needs. Amazon Bedrock, a fully managed service providing access to such models, makes this possible. Fine-tuning large language models (LLMs) on domain-specific data improves their performance on tasks like answering product questions or generating relevant content.

In this article, we show how Amazon Bedrock and Amazon SageMaker Canvas, a no-code AI suite, allow business users without deep technical expertise to fine-tune and deploy LLMs. You can transform customer interaction using datasets like product Q&As in just a few clicks using Amazon Bedrock and Amazon SageMaker JumpStart models.

Solution overview

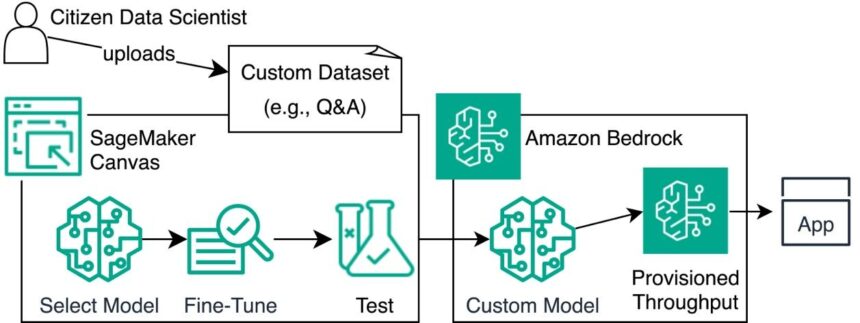

The following diagram illustrates this architecture.

In the following sections, we show you how to fine-tune a model by preparing your dataset, creating a new model, importing the dataset, and selecting a foundation model. We also show how to analyze and test the model, then deploy the model through Amazon Bedrock.

Prerequisites

First-time users need an AWS account and an AWS Identity and Access Management (IAM) role with access to SageMaker, Amazon Bedrock, and Amazon Simple Storage Service (Amazon S3).

To follow this article, complete the prerequisite steps to create a domain and enable access to Amazon Bedrock models:

- Create a SageMaker domain.

- On the domain details page, view user profiles.

- Choose Launch by your profile, and choose Canvas.

- Confirm that your SageMaker IAM role and domain roles have the necessary permissions and trust relationships.

- On the Amazon Bedrock console, choose Model access in the navigation pane.

- Choose Manage model access.

- Select Amazon to enable the Amazon Titan model.

Prepare your dataset

Complete the following steps to prepare your dataset:

- Download the following CSV dataset of question-answer pairs.

- Confirm that your dataset has no formatting issues.

- Copy the data to a new sheet and delete the original.
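As a minimal sketch of the formatting check in the steps above, the following Python snippet scans a CSV of question-answer pairs for missing columns and empty cells. The column names question and answer match the ones selected later in this article; the function name find_formatting_issues and the sample rows are hypothetical and not part of SageMaker Canvas.

```python
import csv
import io


def find_formatting_issues(csv_text, required=("question", "answer")):
    """Return a list of human-readable problems found in the CSV text."""
    reader = csv.DictReader(io.StringIO(csv_text))
    header = reader.fieldnames or []
    missing = [col for col in required if col not in header]
    if missing:
        return [f"missing column: {col}" for col in missing]

    issues = []
    # Row numbers start at 2 because row 1 is the header
    # (assumes one record per line, with no multi-line quoted cells).
    for row_no, row in enumerate(reader, start=2):
        for col in required:
            if not (row.get(col) or "").strip():
                issues.append(f"row {row_no}: empty {col}")
    return issues


# Hypothetical sample data: the second record has no answer.
sample = "question,answer\nWhat is Canvas?,A no-code ML tool\nWho built it?,\n"
print(find_formatting_issues(sample))
```

An empty list means the check found nothing to fix. It is a basic sanity check only and does not replace reviewing the data itself.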

Create a new model

SageMaker Canvas lets you fine-tune multiple models simultaneously, so you can compare them on a leaderboard and choose the best one after fine-tuning. However, this article focuses on the Amazon Titan Text G1 - Express LLM. Complete the following steps to create your model:

- In SageMaker Canvas, choose My models in the navigation pane.

- Choose New model.

- For Model name, enter a name (for example, MyModel).

- For Problem type, select Fine-tune foundation model.

- Choose Create.

The next step is to import your dataset into SageMaker Canvas:

- Create a dataset named QA-Pairs.

- Upload the prepared CSV file or select it from an S3 bucket.

- Choose the dataset, then choose Select a dataset.

Select a foundation model

After you upload your dataset, select a foundation model and fine-tune it with your dataset. Complete the following steps:

- On the Fine-tune tab, on the Select base models menu, select Titan Express.

- For Select input column, choose question.

- For Select output column, choose answer.

- Choose Fine-tune.

Wait 2-5 hours for SageMaker to finish fine-tuning your models.

Analyze the model

Once fine-tuning is complete, you can view statistics for your new model, including:

- Training loss – The penalty for each error in predicting the next word during training. Lower values indicate better performance.

- Training perplexity – A measure of the model's surprise when encountering text during training. Lower perplexity suggests higher model confidence.

- Validation loss and validation perplexity – Similar to training metrics, but measured during the validation phase.
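To make the relationship between these metrics concrete: perplexity is conventionally the exponential of the average cross-entropy loss. The sketch below assumes the reported loss is a mean per-token negative log-likelihood in natural-log units, which this article does not confirm for SageMaker Canvas. The loss values are made up for illustration.

```python
import math


def perplexity(mean_loss):
    """Convert a mean per-token cross-entropy loss (in nats) to perplexity."""
    return math.exp(mean_loss)


# Hypothetical loss values, not taken from a real fine-tuning run.
for name, loss in [("training", 1.2), ("validation", 1.5)]:
    print(f"{name}: loss={loss:.2f} perplexity={perplexity(loss):.2f}")
```

Under that assumption, the two metrics always move in the same direction, so a lower loss implies a lower perplexity.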

To get a detailed report on how your custom model performed across various dimensions, such as toxicity and accuracy, choose Generate evaluation report. Then choose Download report.

Canvas offers a Python Jupyter notebook detailing your fine-tuning job, alleviating concerns about vendor lock-in associated with no-code tools and allowing details to be shared with data science teams for further validation and deployment.

If you selected multiple foundation models to create custom models from your dataset, see the Model leaderboard to compare them on dimensions like loss and perplexity.

Test the models

You now have access to custom models that can be tested in SageMaker Canvas. Complete the following steps to test the models:

- Choose Test in Ready-to-use models and wait 15-30 minutes for your test endpoint to be deployed.

This test endpoint remains active for only 2 hours to avoid unintended costs.

Once deployment is complete, you will be redirected to the SageMaker Canvas playground, with your model pre-selected.

- Choose Compare and select the foundation model used for your custom model.

- Enter a phrase directly from your training dataset to confirm that the custom model at least answers such a question better.

For this example, we enter the question, “Who developed the lie-detecting algorithm Fraudoscope?”

The refined model answered correctly:

“The lie-detecting algorithm Fraudoscope was developed by Tselina Data Lab.”

Amazon Titan responded incorrectly and verbosely. To its credit, however, the model raised important ethical concerns and limitations of facial recognition technologies in general.

Let's ask a question about an NVIDIA chip, which powers Amazon Elastic Compute Cloud (Amazon EC2) P4d instances: “How much memory in an A100?”

Again, the custom model not only gives a more accurate answer, but also answers with the brevity you'd expect from a question-and-answer bot:

“An A100 GPU provides up to 40 GB of high-speed HBM2 memory.”

Amazon Titan's answer is incorrect.

Deploy the model via Amazon Bedrock

For production use, especially if you plan to provide access to tens or even thousands of employees by integrating the model into an application, you can deploy the models as API endpoints. Complete the following steps to deploy your model:

- On the Amazon Bedrock console, choose Foundation models in the navigation pane, then choose Custom models.

- Locate the model with the prefix Canvas- with Amazon Titan as the source.

Alternatively, you can use the AWS Command Line Interface (AWS CLI): aws bedrock list-custom-models

- Take note of the modelArn, which you will use in the next step, and the modelName, or save them directly as variables.
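If you prefer the AWS SDK for Python, the lookup can be sketched as follows. This is a minimal sketch: the helper names are hypothetical, the Region is an assumption, and only the first page of results is read. The list_custom_models call and its modelSummaries, modelName, and modelArn fields come from the boto3 bedrock client.

```python
def find_canvas_models(summaries, prefix="Canvas-"):
    """Return (modelName, modelArn) pairs whose name starts with the prefix."""
    return [
        (m["modelName"], m["modelArn"])
        for m in summaries
        if m["modelName"].startswith(prefix)
    ]


def list_canvas_models(region_name="us-east-1"):
    """Query Amazon Bedrock for custom models. Requires AWS credentials.

    Only the first page of results is read; follow nextToken for more.
    The default Region is an assumption, so change it to match your setup.
    """
    import boto3  # imported lazily so the filter above works without the SDK

    bedrock = boto3.client("bedrock", region_name=region_name)
    response = bedrock.list_custom_models()
    return find_canvas_models(response["modelSummaries"])


# Offline demonstration with hypothetical summaries (placeholder ARNs).
demo = [
    {"modelName": "Canvas-example", "modelArn": "arn:aws:bedrock:example-1"},
    {"modelName": "another-model", "modelArn": "arn:aws:bedrock:example-2"},
]
print(find_canvas_models(demo))
```

With valid credentials, list_canvas_models() returns the same kind of pairs for your account, which you can keep in variables for the next step.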

To start using your model, you must provision throughput.

- On the Amazon Bedrock console, choose Purchase Provisioned Throughput.

- Enter a name, set 1 model unit, and choose no commitment term.

- Confirm the purchase.

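You can also purchase provisioned throughput from the AWS CLI with aws bedrock create-provisioned-model-throughput, passing the model ARN and name from the previous step (either typed in directly or taken from the variables you saved).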
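The same purchase can be sketched with the AWS SDK for Python. The helper names and the default Region are assumptions. The create_provisioned_model_throughput call and its modelId, provisionedModelName, and modelUnits parameters come from the boto3 bedrock client. Leaving out commitmentDuration requests a no-commitment purchase, matching the console steps above.

```python
def provisioned_throughput_request(model_arn, model_name, model_units=1):
    """Build keyword arguments for create_provisioned_model_throughput.

    commitmentDuration is left out on purpose, which requests
    a no-commitment purchase.
    """
    if model_units < 1:
        raise ValueError("model_units must be at least 1")
    return {
        "modelId": model_arn,
        "provisionedModelName": model_name,
        "modelUnits": model_units,
    }


def purchase_provisioned_throughput(model_arn, model_name, region_name="us-east-1"):
    """Purchase throughput and return its ARN.

    Requires AWS credentials and incurs charges while the throughput exists.
    """
    import boto3  # imported lazily so the builder above works without the SDK

    bedrock = boto3.client("bedrock", region_name=region_name)
    response = bedrock.create_provisioned_model_throughput(
        **provisioned_throughput_request(model_arn, model_name)
    )
    return response["provisionedModelArn"]
```

Separating the request builder from the API call keeps the parameters easy to inspect before anything is purchased.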

After approximately five minutes, the model status changes from Creating to In Service.

If you are using the AWS CLI, you can see the status via aws bedrock list-provisioned-model-throughputs.
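For scripted deployments, the status check can be wrapped in a small polling loop. This is a sketch under assumptions: the function name, polling interval, and retry limit are hypothetical. The get_provisioned_model_throughput call and the status values Creating, InService, and Failed come from the boto3 bedrock client. The client is passed in as an argument so the loop can also be exercised with a stand-in object.

```python
import time


def wait_until_in_service(bedrock, provisioned_model_arn, poll_seconds=30, max_polls=40):
    """Poll until the provisioned throughput is InService.

    Raises RuntimeError if creation fails and TimeoutError if the
    status has not reached InService after max_polls checks.
    """
    for _ in range(max_polls):
        response = bedrock.get_provisioned_model_throughput(
            provisionedModelId=provisioned_model_arn
        )
        status = response["status"]
        if status == "InService":
            return status
        if status == "Failed":
            raise RuntimeError("provisioned throughput creation failed")
        time.sleep(poll_seconds)
    raise TimeoutError("provisioned throughput did not reach InService in time")
```

In real use, pass boto3.client("bedrock") as the first argument and the ARN returned when you purchased the throughput as the second.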

Use the model

You can access your fine-tuned LLM through the Amazon Bedrock console, API, CLI, or SDKs.

In the Chat playground, choose the Fine-tuned models category, then select your Canvas- prefixed model and the provisioned throughput.

You can also enrich your existing software as a service (SaaS) offerings, software platforms, web portals, or mobile applications with your fine-tuned LLM using the API or SDKs. These let you send prompts to the Amazon Bedrock endpoint using your preferred programming language.
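As a minimal sketch of such a call with the AWS SDK for Python, the snippet below sends a prompt to the provisioned model and extracts the generated text. The helper names, default Region, and generation settings are assumptions. The request and response shapes (inputText, textGenerationConfig, results, outputText) follow the Amazon Titan Text format, and invoke_model comes from the boto3 bedrock-runtime client.

```python
import json


def titan_request_body(prompt, max_tokens=256, temperature=0.0):
    """Serialize a prompt into the JSON body used by Amazon Titan Text models."""
    return json.dumps({
        "inputText": prompt,
        "textGenerationConfig": {
            "maxTokenCount": max_tokens,
            "temperature": temperature,
        },
    })


def titan_output_text(response_body):
    """Extract the generated text from a Titan Text response body (str or bytes)."""
    return json.loads(response_body)["results"][0]["outputText"]


def ask(prompt, provisioned_model_arn, region_name="us-east-1"):
    """Send a prompt to the provisioned custom model. Requires AWS credentials."""
    import boto3  # imported lazily so the helpers above work without the SDK

    runtime = boto3.client("bedrock-runtime", region_name=region_name)
    response = runtime.invoke_model(
        modelId=provisioned_model_arn,  # ARN of the provisioned throughput
        body=titan_request_body(prompt),
        contentType="application/json",
        accept="application/json",
    )
    return titan_output_text(response["body"].read())
```

For example, ask("Who developed the lie-detecting algorithm Fraudoscope?", provisioned_model_arn) would send the question used earlier in this article, where provisioned_model_arn is the ARN of your own provisioned throughput.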

The response demonstrates the model's tailored ability to answer these types of questions:

“The lie-detecting algorithm Fraudoscope was developed by Tselina Data Lab.”

This improves on Amazon Titan's response before fine-tuning:

“Marston Morse developed the lie-detecting algorithm Fraudoscope.”

For a complete example of calling models on Amazon Bedrock, see the following GitHub repository. This repository provides a ready-to-use code base that allows you to experiment with different LLMs and deploy a versatile chatbot architecture within your AWS account. Now you have the skills to use it with your custom model.

Another reference that might spark your imagination is Amazon Bedrock Samples, which can help you get started with a number of other use cases.

Conclusion

In this article, we showed you how to fine-tune an LLM to better meet your business needs, deploy your custom model as an Amazon Bedrock API endpoint, and use that endpoint in application code. This unlocks the power of the custom language model for a broader set of people within your company.

Although we used examples based on sample data, this article has presented the capabilities of these tools and their potential applications in real-world scenarios. The process is simple and applicable to various data sets, such as your organization's FAQs, as long as they are in CSV format.

Take what you've learned and start thinking about ways to use custom AI models in your organization. For more inspiration, see Overcome common contact center challenges with generative AI and Amazon SageMaker Canvas and AWS re:Invent 2023 – New LLM Features in Amazon SageMaker Canvas, with Bain & Company (AIM363).

About the authors

Yann Stoneman is a Solutions Architect at AWS focused on machine learning and serverless application development. With a background in software engineering and a blend of art and technology education at Juilliard and Columbia, Yann brings a creative approach to AI challenges. He actively shares his expertise through his YouTube channel, blog posts and presentations.

Davide Gallitelli is a specialist solutions architect for AI/ML in EMEA. He is based in Brussels and works closely with clients throughout the Benelux. He has been a developer since a young age, starting coding at the age of 7. He started learning AI/ML during his later years of college and has since fallen in love with it.