Research in computational linguistics continues to explore how large language models (LLMs) can be adapted to incorporate new knowledge without degrading what they already know. A major challenge is ensuring that these models, which underpin many language processing applications, remain accurate as their knowledge expands.

A conventional approach is supervised fine-tuning, in which an LLM is further trained on data that either overlaps with or extends beyond what it saw during pre-training. Although popular, this method has produced mixed results. During fine-tuning, the model is shown examples it may already know, know only partially, or not know at all, and it adjusts its responses accordingly. The effectiveness of the method is typically assessed by how well the model maintains its performance on data that aligns with or extends its existing knowledge.

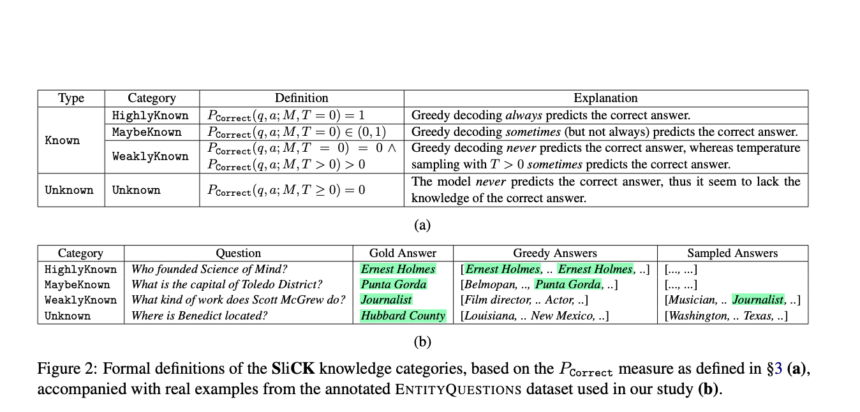

A research team from Technion – Israel Institute of Technology and Google Research has introduced SliCK, a framework designed to examine how new knowledge is integrated into LLMs. The methodology sorts knowledge into distinct categories, ranging from HighlyKnown to Unknown, which allows a granular analysis of how different types of information affect model performance. This setup makes it possible to assess how well a model assimilates new facts while preserving the accuracy of its existing knowledge, highlighting the delicate balance required in model training.

Methodologically, the study uses PaLM, an LLM developed by Google, which is fine-tuned on carefully designed datasets containing varying proportions of the four knowledge categories: HighlyKnown, MaybeKnown, WeaklyKnown and Unknown. These datasets are derived from a subset of factual questions mapped from Wikidata relations, allowing a controlled examination of the model's learning dynamics. The experiment quantifies the model's performance in each category using the exact match (EM) metric to assess how effectively the model integrates new information while avoiding hallucinations. This structured approach gives a clear view of how fine-tuning on familiar and new data affects model accuracy.
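The categorization described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes that each question has already been answered several times by the model under greedy decoding and under temperature sampling, and that a category is assigned from the resulting exact-match rates. The function names (`normalize`, `exact_match`, `categorize`) and the normalization rules are hypothetical choices made for this example.

```python
import re
import string
from enum import Enum


class Knowledge(Enum):
    HIGHLY_KNOWN = "HighlyKnown"
    MAYBE_KNOWN = "MaybeKnown"
    WEAKLY_KNOWN = "WeaklyKnown"
    UNKNOWN = "Unknown"


def normalize(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, gold: str) -> bool:
    """EM: the normalized prediction equals the normalized gold answer."""
    return normalize(prediction) == normalize(gold)


def em_rate(predictions: list[str], gold: str) -> float:
    """Fraction of predictions that exactly match the gold answer."""
    if not predictions:
        return 0.0
    return sum(exact_match(p, gold) for p in predictions) / len(predictions)


def categorize(gold: str, greedy: list[str], sampled: list[str]) -> Knowledge:
    """Assign a category from EM rates (assumed rule, see lead-in).

    greedy:  answers produced with greedy decoding under different prompts
    sampled: answers produced with temperature sampling
    """
    if not greedy:
        raise ValueError("at least one greedy answer is required")
    greedy_rate = em_rate(greedy, gold)
    if greedy_rate == 1.0:
        return Knowledge.HIGHLY_KNOWN   # greedy decoding is always correct
    if greedy_rate > 0.0:
        return Knowledge.MAYBE_KNOWN    # greedy decoding is sometimes correct
    if em_rate(sampled, gold) > 0.0:
        return Knowledge.WEAKLY_KNOWN   # only sampling ever finds the answer
    return Knowledge.UNKNOWN            # the model never produces the answer


if __name__ == "__main__":
    print(categorize("Paris", ["Paris", "Lyon"], ["Paris"]).value)
```

Keeping the EM check separate from the category rule makes it easy to swap in a different matching criterion without touching the thresholds.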

According to the study results, SliCK categorization is effective for analyzing and improving the fine-tuning process. Models fine-tuned with a mix of 50% known and 50% unknown data showed the best balance, achieving 5% higher accuracy in generating correct answers than models trained on mostly unknown data. Conversely, when the proportion of unknown data exceeded 70%, the models' propensity to hallucinate increased by approximately 12%. These results underline the role of SliCK in quantitatively assessing and managing error risk as new information is integrated during fine-tuning.
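To make the idea of a controlled known/unknown mix concrete, the sketch below builds a fixed-size fine-tuning set with a chosen fraction of Unknown examples. It is an illustrative assumption about how such a mix could be assembled, not the procedure used in the study; `build_mix` and its parameters are hypothetical names.

```python
import random
from typing import Sequence, TypeVar

T = TypeVar("T")


def build_mix(
    known: Sequence[T],
    unknown: Sequence[T],
    unknown_fraction: float,
    total: int,
    seed: int = 0,
) -> list[T]:
    """Sample a fine-tuning set of `total` examples with a fixed Unknown share.

    Holding `total` constant while varying `unknown_fraction` keeps the
    comparison between mixes fair: only the composition changes.
    """
    if not 0.0 <= unknown_fraction <= 1.0:
        raise ValueError("unknown_fraction must lie in [0, 1]")
    n_unknown = round(total * unknown_fraction)
    n_known = total - n_unknown
    if n_unknown > len(unknown) or n_known > len(known):
        raise ValueError("not enough examples for the requested mix")
    rng = random.Random(seed)  # seeded so the mix is reproducible
    mix = rng.sample(list(known), n_known) + rng.sample(list(unknown), n_unknown)
    rng.shuffle(mix)  # avoid presenting all known examples first
    return mix


if __name__ == "__main__":
    known = [("known", i) for i in range(100)]
    unknown = [("unknown", i) for i in range(100)]
    for fraction in (0.0, 0.5, 0.7):
        mix = build_mix(known, unknown, fraction, total=50)
        share = sum(tag == "unknown" for tag, _ in mix) / len(mix)
        print(f"requested {fraction:.0%} unknown, got {share:.0%}")
```

Sweeping `unknown_fraction` over several values and fine-tuning one model per mix is one way to reproduce the kind of comparison the article describes.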

To summarize, the research by Technion – Israel Institute of Technology and Google Research takes an in-depth look at fine-tuning LLMs, using the SliCK framework to manage the integration of new knowledge. The study highlights the delicate balance required in model training, with the PaLM model showing improved accuracy and fewer hallucinations when trained under controlled knowledge conditions. These results point to the importance of strategic data categorization for model reliability and performance, and they offer useful insights for future fine-tuning methodologies.

Check out the Paper. All credit for this research goes to the researchers of this project.

Nikhil is an intern consultant at Marktechpost. He is pursuing an integrated dual degree in Materials at the Indian Institute of Technology, Kharagpur. Nikhil is an AI/ML enthusiast who is always looking for applications in areas such as biomaterials and biomedical science. With a strong background in materials science, he explores new advances and creates opportunities to contribute.