Understanding and mitigating hallucinations in vision language models (VLVM) is an emerging area of research that addresses the generation of consistent but factually incorrect responses by these advanced AI systems. As VLVMs integrate more and more textual and visual input to generate responses, the accuracy of these results becomes crucial, especially in contexts where accuracy is paramount, such as medical diagnostics or autonomous driving.

Hallucinations in VLVM typically manifest as plausible but incorrect details generated in an image. These inaccuracies present significant risks that can distort decisions in critical applications. The challenge lies in detecting these errors and developing methods to mitigate them effectively, thereby ensuring the reliability of VLVM outputs.

Most existing benchmarks for assessing hallucinations in VLVM focus on responses to constrained query formats, such as yes/no questions about specific objects or attributes in an image. These criteria often fail to measure the more complex and overt hallucinations that can occur in various real-world applications. As a result, there is a significant gap in the ability to fully understand and mitigate the broader spectrum of hallucinations that VLVMs can produce.

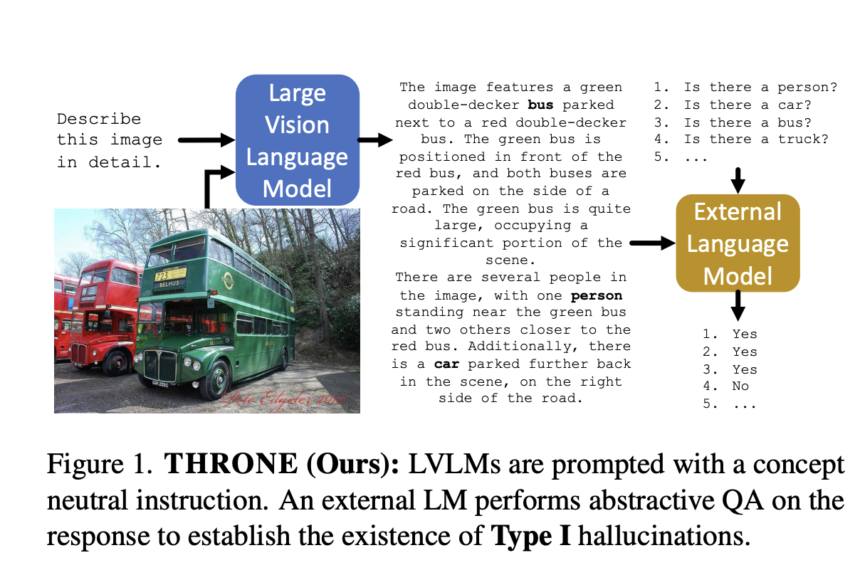

Researchers at the University of Oxford, AWS AI Labs, introduced a new framework called THRONE (Recognition of text hallucinations from images with object probes for open assessment) to fill this gap. THRONE is designed to assess Type I hallucinations, those that occur in response to open-ended prompts requiring detailed image descriptions. Unlike previous methods, THRONE uses publicly available language models to assess hallucinations in free-form responses generated by various VLVMs, providing a more comprehensive and rigorous approach.

THRONE leverages multiple metrics to quantitatively measure hallucinations across different VLVMs. For example, it uses precision and recall metrics as well as an F0.5 score per class, emphasizing precision twice as much as recall. This rating is particularly relevant in scenarios where false positives, i.e. incorrect but plausible answers, are more detrimental than false negatives.

An evaluation of the effectiveness of THRONE revealed relevant data on the prevalence and characteristics of hallucinations in current VLVM. Despite the framework's advanced approach, results indicate that many VLVMs still struggle with a high rate of hallucinations. For example, the framework detected that some of the evaluated models produce responses, with approximately 20% of the objects mentioned being hallucinations. This high rate of inaccuracies highlights the ongoing challenge of reducing hallucinations and improving the reliability of VLVM output.

In conclusion, the THRONE framework represents a significant advance in the assessment of hallucinations in vision language models, particularly addressing the complex issue of Type I hallucinations in free-form responses. While existing benchmarks have struggled to effectively measure these more nuanced errors, THRONE uses a new combination of publicly available language models and a robust metric system, including precision, recall, and F0.5 scores per class. Despite this progress, the high rate of detected hallucinations, approximately 20% in some models, highlights current challenges and the need for further research to improve the accuracy and reliability of VLVMs in practical applications.

Check Paper. All credit for this research goes to the researchers of this project. Also don’t forget to follow us on Twitter. Join our Telegram channel, Discord ChannelAnd LinkedIn Groops.

If you like our work, you will love our bulletin..

Don't forget to join our 42,000+ ML subreddit

Sana Hassan, Consulting Intern at Marktechpost and a dual degree student at IIT Madras, is passionate about applying technology and AI to address real-world challenges. With a keen interest in solving practical problems, he brings a fresh perspective to the intersection of AI and real-world solutions.