Unlocking the potential of multimodal large language models (MLLMs) to handle diverse modalities such as speech, text, image, and video is a crucial step in the development of AI. This capability underpins applications such as natural language understanding, content recommendation, and multimodal information retrieval, where it can improve the accuracy and robustness of AI systems.

Traditional methods for handling multimodal challenges often rely on dense models or single-expert approaches. Dense models activate all of their parameters for every input, so computational cost grows with model size and scalability suffers. Single-expert approaches, on the other hand, lack the flexibility and adaptability needed to integrate and understand diverse multimodal data. Both often struggle with complex tasks that involve several modalities at once, such as understanding long segments of speech or processing complex image-text combinations.

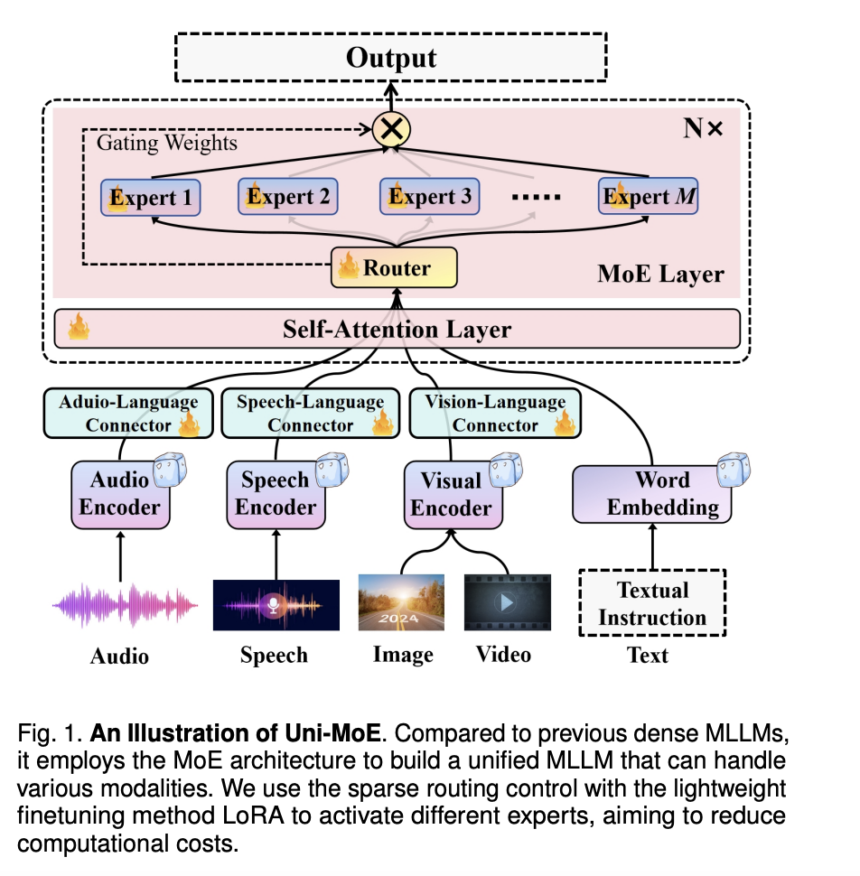

Researchers at Harbin Institute of Technology proposed Uni-MoE, which combines a Mixture of Experts (MoE) architecture with a three-phase training strategy. Uni-MoE optimizes expert selection and collaboration, enabling modality-specific experts to work together to improve model performance. The three-phase strategy includes specialized training phases for multimodal data, which improves model stability, robustness, and adaptability. The approach addresses the drawbacks of dense models and single-expert approaches and demonstrates clear gains in the capabilities of multimodal AI systems, especially on complex tasks involving several modalities.

Uni-MoE's technical advancements include an MoE framework specialized across different modalities and a three-phase training strategy for optimized collaboration. Routing mechanisms allocate each input to the relevant experts, so only part of the model is used per input and computing resources are spent more efficiently, while an auxiliary balancing loss keeps experts equally important during training. These design details make Uni-MoE a robust solution for complex multimodal tasks.
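To make the routing and balancing ideas concrete, here is a minimal sketch in plain Python. It is not the Uni-MoE implementation: it assumes a common formulation from sparse MoE work, namely softmax gating with top-k expert selection and an auxiliary loss of the form `num_experts * sum(f_i * p_i)`. The function names (`route_top_k`, `load_balancing_loss`), the choice of `k=2`, and the example logits are all hypothetical and chosen for illustration only.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k=2):
    """Pick the k highest-probability experts for one token.

    Returns (expert_indices, gate_weights, full_probs). The gate weights are
    renormalized so they sum to 1 over the selected experts.
    """
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    kept = sum(probs[i] for i in chosen)
    gates = [probs[i] / kept for i in chosen]
    return chosen, gates, probs


def load_balancing_loss(batch_logits, k=2):
    """Assumed auxiliary loss: num_experts * sum_i(f_i * p_i).

    f_i is the fraction of routing slots assigned to expert i, and p_i is the
    mean router probability for expert i over the batch. The value is 1.0 when
    the router probabilities are uniform and typically grows as routing
    concentrates on a few experts.
    """
    num_experts = len(batch_logits[0])
    num_tokens = len(batch_logits)
    counts = [0] * num_experts
    prob_sums = [0.0] * num_experts
    for logits in batch_logits:
        chosen, _, probs = route_top_k(logits, k)
        for i in chosen:
            counts[i] += 1
        for i, p in enumerate(probs):
            prob_sums[i] += p
    slots = num_tokens * k
    return num_experts * sum(
        (counts[i] / slots) * (prob_sums[i] / num_tokens)
        for i in range(num_experts)
    )


if __name__ == "__main__":
    # Hypothetical router scores for one token over four experts.
    experts, gates, _ = route_top_k([2.0, 0.5, 1.0, -1.0], k=2)
    print("selected experts:", experts, "gate weights:", gates)

    # A batch whose router strongly prefers experts 0 and 1.
    skewed = [[5.0, 5.0, 0.0, 0.0]] * 8
    print("balancing loss (skewed router):", load_balancing_loss(skewed))
```

In a training loop, a term like this would usually be added to the task loss with a small weight, nudging the router away from sending most inputs to the same few experts.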

The results show Uni-MoE reaching accuracy scores ranging from 62.76% to 66.46% on benchmarks such as ActivityNet-QA, RACE-Audio, and A-OKVQA. It outperforms dense models, generalizes better, and handles long speech understanding tasks efficiently. These results mark a significant step forward in multimodal learning, promising improved performance, efficiency, and generalization for future AI systems.

In conclusion, Uni-MoE represents a significant step forward in multimodal learning and AI systems. By combining a Mixture of Experts (MoE) architecture with a three-phase training strategy, it addresses the limitations of traditional methods and improves performance, efficiency, and generalizability across diverse modalities. The accuracy scores achieved on benchmarks including ActivityNet-QA, RACE-Audio, and A-OKVQA highlight its strength on complex tasks such as long speech understanding. The approach addresses existing challenges and paves the way for future advances in multimodal AI systems.

Check out the paper. All credit for this research goes to the researchers of this project.