In the field of deep learning, particularly in NLP, image analysis, and biology, there is an increasing emphasis on developing models that provide both computational efficiency and robust expressiveness. Attention mechanisms have been revolutionary, allowing better management of sequence modeling tasks. However, the computational complexity associated with these mechanisms scales quadratically with sequence length, which becomes a significant bottleneck when handling long-context tasks such as genomics and natural language processing. The ever-increasing need to process larger and more complex data sets has pushed researchers to find more efficient and scalable solutions.

A major challenge in this area is to reduce the computational load of attention mechanisms while preserving their expressiveness. Many approaches have attempted to solve this problem by sparsifying attention matrices or employing low-rank approximations. Techniques such as Reformer, Routing Transformer, and Linformer have been developed to improve the computational efficiency of attention mechanisms. Yet these techniques struggle to balance computational complexity against expressive power. Some models combine these techniques with dense attention layers to improve expressiveness while keeping computation feasible.
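To make the low-rank idea concrete, here is a minimal NumPy sketch in the spirit of Linformer-style attention: keys and values are projected along the sequence axis from length L down to a smaller length r, so the score matrix is L × r rather than L × L. The function names and the projection matrices `proj_k` and `proj_v` are illustrative assumptions, not code from any of the papers mentioned.

```python
import numpy as np

def softmax(z, axis=-1):
    # Numerically stable softmax along the given axis.
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def dense_attention(q, k, v):
    # Standard attention: the score matrix is (L, L), hence quadratic in L.
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores) @ v

def low_rank_attention(q, k, v, proj_k, proj_v):
    # Hypothetical low-rank variant: proj_k and proj_v have shape (r, L)
    # and compress keys and values along the sequence axis.
    k_small = proj_k @ k                                  # (r, d)
    v_small = proj_v @ v                                  # (r, d)
    scores = q @ k_small.T / np.sqrt(q.shape[-1])         # (L, r)
    return softmax(scores) @ v_small                      # (L, d)

rng = np.random.default_rng(0)
L, d, r = 256, 16, 32
q, k, v = (rng.standard_normal((L, d)) for _ in range(3))
proj_k = rng.standard_normal((r, L)) / np.sqrt(L)
proj_v = rng.standard_normal((r, L)) / np.sqrt(L)
out = low_rank_attention(q, k, v, proj_k, proj_v)
print(out.shape)
```

The trade-off described above shows up directly: with r much smaller than L the cost drops, but the output is only an approximation of dense attention unless the projections lose no information.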

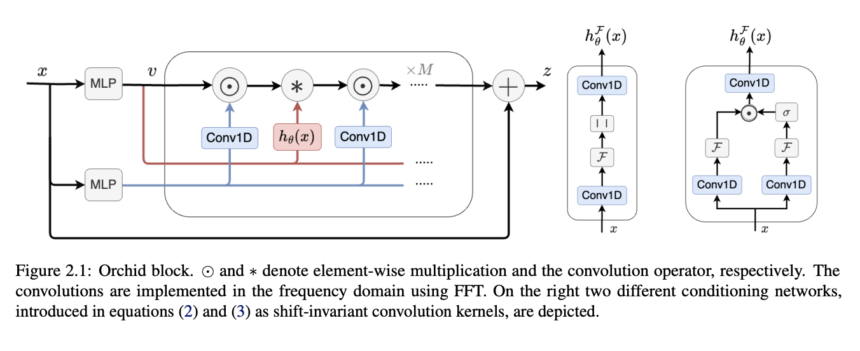

A new architecture known as Orchid has emerged from research conducted at the University of Waterloo. This sequence modeling architecture incorporates a data-dependent convolution mechanism to overcome the limitations of traditional attention-based models. Orchid is designed to address the challenges inherent in sequence modeling, particularly quadratic complexity. By leveraging a new data-dependent convolution layer, Orchid dynamically adjusts its kernel based on input data using a conditioning neural network, allowing it to efficiently handle sequence lengths of up to 131K. This dynamic convolution filters long sequences efficiently, enabling scalability with near-linear complexity.
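A rough back-of-the-envelope comparison shows why the gap between quadratic and near-linear scaling matters at these lengths. The sketch below assumes that the longest sequence length is 131,072 (2^17) and that "near-linear" means a cost proportional to L · log2(L); constant factors and hidden dimensions are ignored, so the numbers indicate orders of magnitude only.

```python
import math

def attention_pairs(seq_len: int) -> int:
    """Number of pairwise scores in a dense L x L attention matrix."""
    return seq_len * seq_len

def near_linear_ops(seq_len: int) -> float:
    """Rough operation count for an L * log2(L) method (constants ignored)."""
    return seq_len * math.log2(seq_len)

for seq_len in (1_024, 16_384, 131_072):
    quadratic = attention_pairs(seq_len)
    near_linear = near_linear_ops(seq_len)
    print(f"L={seq_len:>7}  L^2={quadratic:.2e}  "
          f"L*log2(L)={near_linear:.2e}  ratio={quadratic / near_linear:.0f}x")
```

Under these assumptions the ratio between the two counts grows as L / log2(L), so the advantage of a near-linear method widens as sequences get longer.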

The heart of Orchid lies in its new data-dependent convolution layer. This layer adapts its kernel using a conditioning neural network, significantly improving Orchid's ability to filter long sequences efficiently. The conditioning network ensures that the kernel adapts to the input data, strengthening the model's ability to capture long-range dependencies while maintaining computational efficiency. By incorporating gating operations, the architecture achieves high expressiveness and near-linear scalability with a complexity of O(L log L). This allows Orchid to handle sequence lengths well beyond the limits of dense attention layers, demonstrating superior performance in sequence modeling tasks.
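The general pattern described here (a kernel produced from the input, a long convolution, and a gate) can be sketched in a few lines of NumPy. This is an illustrative toy under stated assumptions, not Orchid's actual implementation: the conditioning network is replaced by a hypothetical short local filter followed by tanh, the gate is a simple elementwise sigmoid, and the O(L log L) cost comes from evaluating the long convolution with the FFT.

```python
import numpy as np

def fft_causal_conv(x, kernel):
    """Causal convolution of two length-L signals in O(L log L) via the FFT."""
    L = x.shape[-1]
    n = 2 * L  # zero-pad so the circular convolution equals the linear one
    y = np.fft.irfft(np.fft.rfft(x, n=n) * np.fft.rfft(kernel, n=n), n=n)
    return y[..., :L]

def conditioning_network(x, w_cond):
    """Hypothetical stand-in: a short local filter plus tanh maps the
    input to a length-L kernel, so the kernel depends on the data."""
    return np.tanh(np.convolve(x, w_cond, mode="same"))

def data_dependent_conv_block(x, w_cond, w_gate):
    kernel = conditioning_network(x, w_cond)      # input-dependent kernel
    filtered = fft_causal_conv(x, kernel)         # long convolution
    gate = 1.0 / (1.0 + np.exp(-w_gate * x))      # elementwise sigmoid gate
    return gate * filtered

rng = np.random.default_rng(0)
x = rng.standard_normal(64)
w_cond = np.array([0.25, 0.5, 0.25])              # assumed short filter weights
y = data_dependent_conv_block(x, w_cond, w_gate=1.0)
print(y.shape)
```

In a fixed-kernel convolution the filter is the same for every input; here a different `x` yields a different kernel, which is the property the paragraph above attributes to the conditioning network.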

The model outperforms traditional attention-based models, such as BERT and Vision Transformers, across domains while using smaller model sizes. On the associative recall task, Orchid consistently achieved accuracy above 99% with sequences of up to 131K. Compared to BERT-base, Orchid-BERT-base has 30% fewer parameters while achieving a 1.0 point improvement in GLUE score. Similarly, Orchid-BERT-large outperforms BERT-large on GLUE while reducing the number of parameters by 25%. These results highlight Orchid's potential as a versatile model for increasingly large and complex datasets.

In conclusion, Orchid successfully addresses the computational complexity limitations of traditional attention mechanisms, providing a transformative approach to sequence modeling in deep learning. Using a data-dependent convolution layer, Orchid efficiently adjusts its kernel based on input data, achieving near-linear scalability while maintaining high expressiveness. Orchid sets a new benchmark in sequence modeling, enabling more efficient deep learning models to process ever-larger datasets.

Check out the Paper. All credit for this research goes to the researchers of this project.

Nikhil is a consulting intern at Marktechpost. He is pursuing an integrated dual degree in Materials at the Indian Institute of Technology, Kharagpur. Nikhil is an AI/ML enthusiast who is always looking for applications in areas such as biomaterials and biomedical science. With a strong background in materials science, he explores new advances and looks for opportunities to contribute.