Text-to-image conversion models did not exist until the advancement of deep neural networks in the mid-2010s. However, generative AI was a hot topic long before ChatGPT. OpenAI's DALL-E, Google Brain's Imagen, and StabilityAI's Stable Diffusion are text-to-image conversion models that have gained attention due to their ability to create images that resemble real photographs and works of art. hand drawn art. These generative AI models have been a game changer in the field of AI.

The 9 Best Open Source Text to Image Templates

So let's take a look at the 9 best open source image generation templates that can help you.

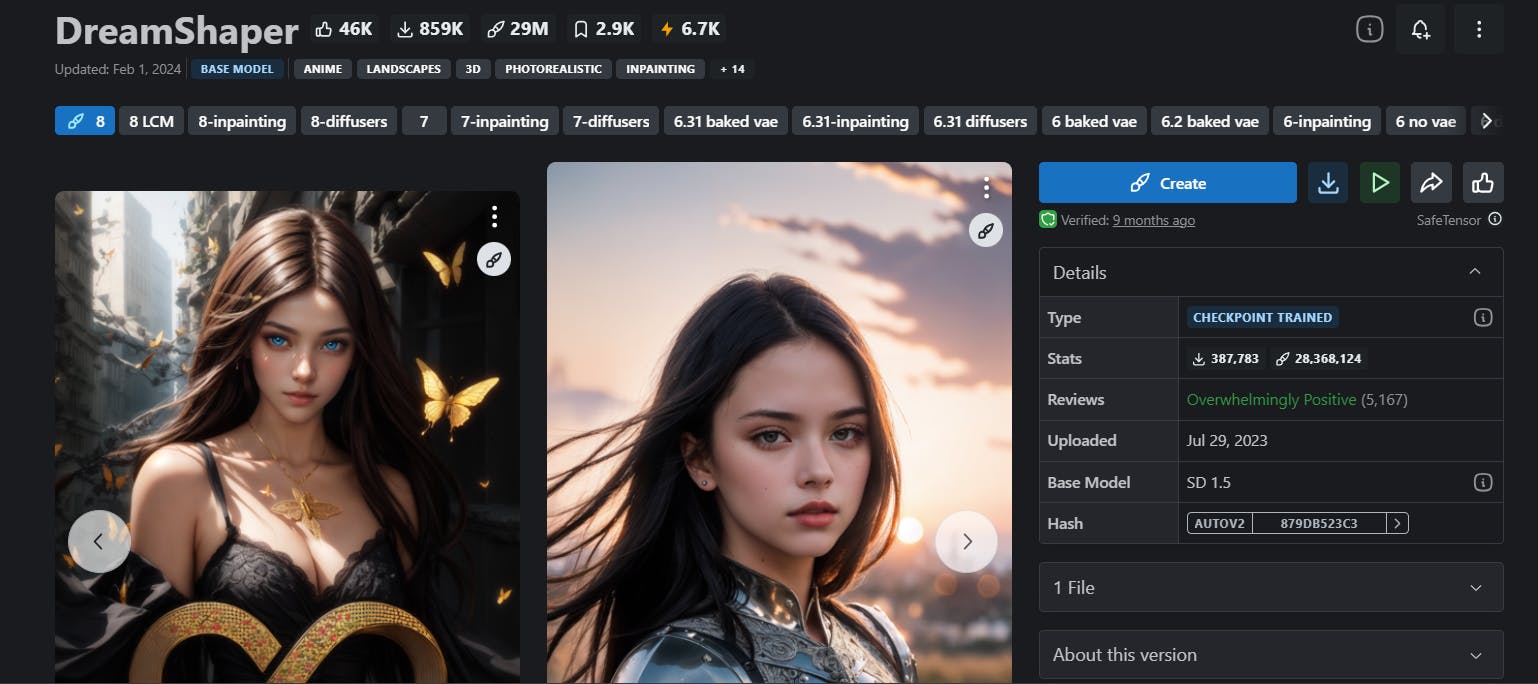

1. Dream shaper

Dream Shaper V7, the popular image generation model based on a diffusion architecture, introduces significant improvements in LoRA support and overall realism. It further improves features from version 6, including increased LoRA support, better styling enhancements, and improved generation at 1024px height (although caution is advised when using this feature ).

This template provides photorealistic images with noise shifting and amplifies anime style generation with booru tags. It also offers a resolution upgrade for better eye performance, fixing issues in earlier versions. The impact of the 3.32 “clip fix” may vary from 3.31, and is highly recommended for mixing purposes. Plus, it involves painting and painting, which adds to its versatility.

If you want to know more, check out This out.

2. Dreamlike photorealistic

Dreamlike Photoreal 2.0 is the ultimate photorealistic template designed by DreamlikeArt. It is based on Stable Diffusion 1.5 and offers the best solution to improve the realism of your generated images by allowing you to embed photos in your prompt. For optimal results, it is recommended to use non-square proportions, with vertical proportions being ideal for portrait-style photos and horizontal proportions for landscape photos. This powerful model was trained on images with dimensions 768 × 768 pixels, but it can easily handle higher resolutions such as 768 x 1024 px or 1024 x 768 px.

Running on server-grade A100 GPUs, it outperforms 8x RTX 3090 GPUs with an average build speed of just 4 seconds. With the ability to process up to 30 images simultaneously and generate up to 4 images simultaneously, this model ensures an efficient and seamless workflow. It includes several features like scaling, natural language editing, face enhancements, pose, depth, sketch replication and many more that make it the ultimate choice for all your photorealistic needs.

You can access it here.

3. Waifu Stream

Waifu Diffusion is the ultimate solution for generating high-quality, realistic anime-style images. This is the refined version (1.3) of the Stable Diffusion model, derived from Stable Diffusion v1.4. The model has a proven track record of producing an impressive variety of widely recognized images. The training dataset included 680,000 text and image samples obtained from a booru site, making it the go-to model for generating anime-style images.

Find their GitHub repository here.

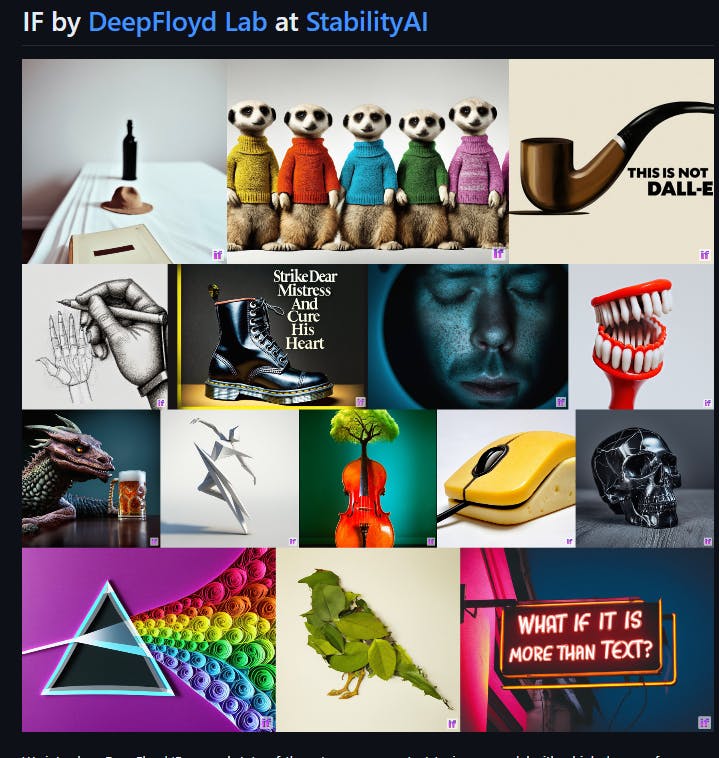

4. DeepFloyd SI

DeepFloyd IF is the ultimate solution for generating realistic visuals and language understanding. This open source text-to-image template features a modular design that includes a fixed text encoder and three interconnected pixel delivery modules. With the ability to generate images of increasing resolution, ranging from 64×64 px to 1024×1024 px, this model is truly cutting edge.

It uses a frozen text encoder derived from the T5 transformer to extract text embeddings which are then used in a UNet architecture enhanced by cross-attention and attention clustering. With its impressive zero-shot FID score of 6.66 on the COCO dataset, DeepFloyd IF outperforms all existing models and is undoubtedly the best option available.

Check out their GitHub repository here.

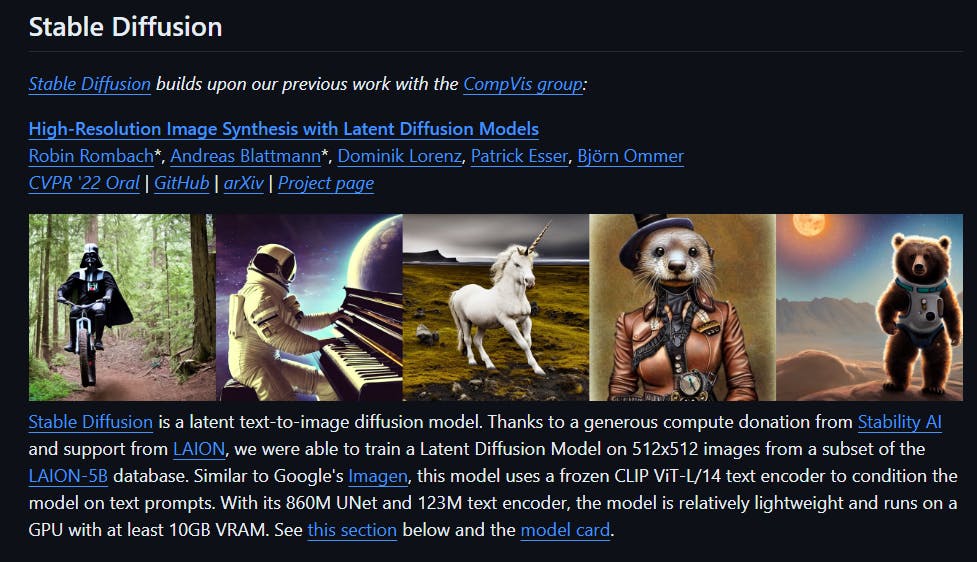

5. Stable broadcast v1-5

The Stable Diffusion v1-5 template is the ultimate solution for generating photorealistic images from any given text input. It is the most advanced model on the market, combining an autoencoder with a streaming model and has extensive training on the laion-aesthetics v2 5+ dataset. Additionally, it was fine-tuned to 595,000 steps at a resolution of 512 × 512 pixels.

Its ability to generate highly realistic images is unmatched by any other model. With the flexibility to generate images from a wide range of latent spaces, this model is not limited to a fixed set of text prompts. It was trained on a large image dataset, allowing it to have a deeper understanding of image characteristics, resulting in the most realistic image generation possible.

Stable Diffusion v1-5 is accessible in both the Diffusers library and the RunwayML GitHub repository. Check it out here.

6. StableStudio

StableStudio, the open source AI image generation tool, is the latest version of Stability AI and the ultimate successor to DreamStudio. Using stable streaming templates, users can generate AI images via text prompts for free and contribute to fixes, new features, and templates. Unlike DreamStudio, which was cloud-based, StableStudio is designed to offer more control and customization options, making it the perfect solution for local installation. Prebuilt binaries are available for easy installation, and billing and API key management features have been removed. With StableStudio, you have everything you need to generate AI images at your fingertips.

Click on here to access the model.

7. Summon AI

InvokeAI is the leading provider of stable delivery models for creative engines, offering cutting-edge AI-powered technologies to visual media production and design professionals, artists, and enthusiasts. Featuring a user-friendly interface and a range of advanced tools, including image-to-image translation, out-painting and in-painting, InvokeAI sets the standard for innovation in this field. It is easy to install and works seamlessly on Windows, Mac, and Linux systems with GPU cards as limited as 4 GB of RAM.

Developed by a network of open source developers, it is also available on GitHub. If you want to take your visual media to the next level, purchase the commercial version from the official InvokeAI website today. This version offers more advanced and customizable templates, making it the perfect choice for professionals looking to stay ahead of the curve.

Click on here to access the model.

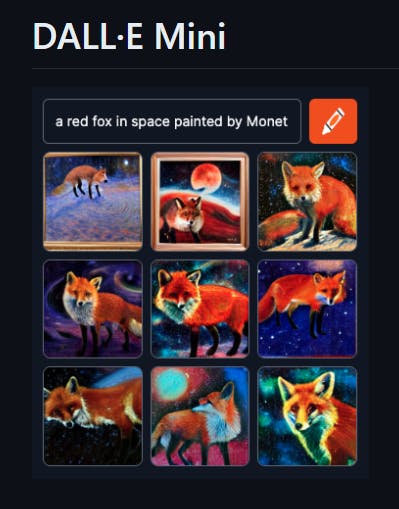

8. DALL-E mini

DALL-E Mini is an open source version of OpenAI's DALL-E that can convert text into photos or illustrations. With this incredible tool, you have the ability to host pre-trained models for text-to-image generation on your own servers, allowing you to use them for personal or commercial use.

While the model struggles with faces and heads, patterns, clothing, and objects look relatively good at low resolution. However, the creators of the DALL-E Mini are constantly working to improve its capabilities and now offer a paid online service called Craiyon, which uses a more advanced and larger version of the DALL-E Mini, known as the DALL-E Mega . With this service, you can expect better results and greater versatility.

Click on here to access the model.

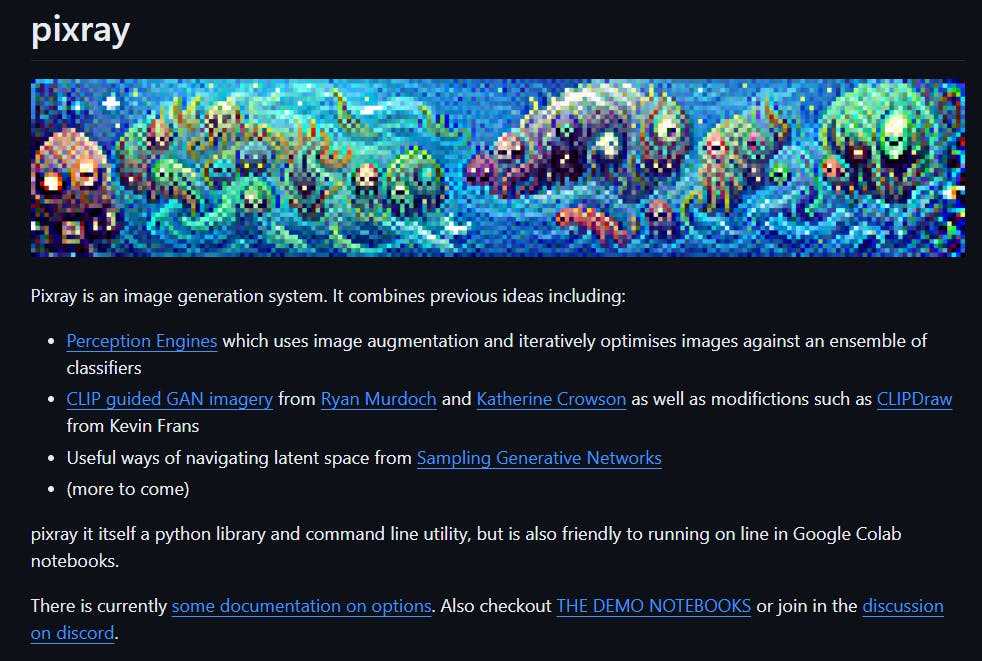

9. Pixray

Pixray is a cutting-edge image generation system that uses advanced techniques to create stunning images from text prompts. With an array of powerful features, including the ability to enter text prompts, select from a range of renderers (called drawers) such as clipdraw, line_sketch and pixel, and adjust formatting settings, Pixray offers a unrivaled flexibility and control.

The output section displays the generated images along with a time indicator showing how long it took to generate the images. To truly unlock the potential of Pixray, users can use additional resources such as GitHub, demo notebooks, Discord community, and documentation. With Pixray, you can create visually stunning images with ease and precision.

Click on here to access the model.

Common features

The models implemented in this process use base models or pre-trained transformers, which are large neural networks that have been trained on huge amounts of unlabeled data and can be used for various tasks using additional settings. Additionally, they use diffusion-based models, which are generative models that create images by gradually adding noise to an initial image and then reversing the process, producing high-resolution images with fine details and realistic textures .

Additionally, these models use basic inputs or spatial information, which are additional inputs that guide the generation process to adhere to the user-specified composition. These inputs can be bounding boxes, keypoints, or images, and can be used to control the layout, pose, or style of the generated image. They also use neural style transfer or adversarial networks, which are techniques that allow models to apply different artistic styles to the generated images, such as impressionism, cubism or abstract, resulting in Beautiful and unique artwork from text inputs.

Finally, these models use natural language queries or text prompts, which are the main inputs that guide image generation. These requests do not require knowledge or entry of code and can be simple or complex, descriptive or abstract, factual or fictitious. The models use what they learn from their training data to generate images that they believe match the requests.